Table of contents

- Abstract

- Main points

- Things you need to know about this release

- Total public service productivity rises as growth in output outstrips growth in inputs

- Education services driving growth in public service productivity in 2015

- Total public service inputs rise again in 2015 but slower than in 2014

- Quality adjusted total public service output rises again in 2015 but slower than in 2014

- Quality remains unchanged between 2014 and 2015

- Indirectly measured service output

- What’s changed in this release?

- Links to related statistics

- Annex A: Methods for estimating output and inputs for each service area

- Annex B: Breakdown on services captured within other government services

1. Abstract

This release contains updated estimates of output, inputs and productivity for public services in the UK between 1997 and 2014, in addition to new estimates for 2015.

Productivity of public services is estimated by comparing the growth in the total amount of output with growth in the total amount of inputs used. Productivity will increase when more output is being produced for each unit of input, compared with the previous year.

Separate estimates of output, inputs and productivity are provided for:

- healthcare

- education

- adult social care (ASC)

- children’s social care (CSC)

- public order and safety (POS)

- social security administration (SSA)

For police, defence and other government services (note 1), only inputs estimates are provided, as output is not easily measurable. Output is therefore assumed to equal the inputs used to create it, so productivity change in these services is zero.

Output, inputs and productivity for total public services are estimated by combining growth rates for individual services using their relative share of total government expenditure as weights.

Notes for: Abstract

- Comprises services such as economic affairs, recreation, and housing.

2. Main points

UK total public service productivity is estimated to have risen by 0.2% between 2014 and 2015.

This is the sixth successive year of improving productivity and marks the longest consecutive period of productivity growth for total public services for which estimates are available.

Productivity growth in 2015 reflected growth in total quality adjusted output (1.5%) exceeding growth in inputs (1.2%).

Rising productivity for education and, to a lesser extent, public order and safety (POS) services provided the largest upward contributions to total public service productivity between 2014 and 2015.

The overall quality of UK public services is estimated to have remained unchanged between 2014 and 2015.

3. Things you need to know about this release

This release contains updated estimates of output, inputs and productivity for public services in the UK between 1997 and 2014, in addition to new estimates for 2015. Figures are published on a calendar year basis for consistency with the UK National Accounts.

Productivity of public services is estimated by comparing growth in the total amount of output with growth in the total amount of inputs used. Productivity will increase when more output is being produced for each unit of input compared with the previous year. Estimates of output, inputs and productivity are given both as growth rates, which show the change from the previous year, and as indices, which show the trend over time (1997 to 2015).
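As a rough illustration of the comparison described above, the sketch below derives a productivity growth rate from output and inputs growth rates. It assumes that productivity is the ratio of an output index to an inputs index; the function name is hypothetical. The inputs are the rounded 2015 growth rates quoted in this release, so the result (about 0.3%) need not match the published estimate of 0.2%.

```python
def productivity_growth(output_growth_pct: float, inputs_growth_pct: float) -> float:
    """Return productivity growth (%), assuming productivity = output index / inputs index."""
    output_ratio = 1 + output_growth_pct / 100
    inputs_ratio = 1 + inputs_growth_pct / 100
    return (output_ratio / inputs_ratio - 1) * 100


# Rounded 2015 growth rates from this release: output 1.5%, inputs 1.2%.
growth_2015 = productivity_growth(1.5, 1.2)
print(f"Productivity growth from rounded rates: {growth_2015:.2f}%")
```

Productivity rises only when output grows faster than inputs; equal growth rates give zero productivity growth.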

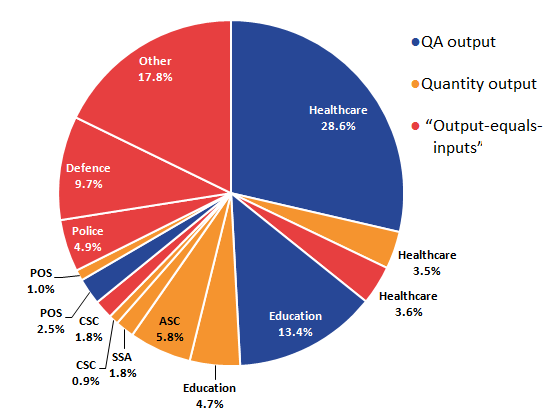

Estimated growth rates of output and inputs for individual service areas are aggregated by their relative share of total government expenditure (expenditure weight) to produce estimates of total public service output, inputs and productivity (note 1). The contribution an individual service area makes to growth in total public services is dependent on not only the growth in that service area, but also its weight relative to total expenditure. Therefore, where service areas experience productivity growing at the same rate, a service area that accounts for a greater share of total expenditure will have a greater effect on the overall growth rate for total public services. The breakdown of expenditure between service areas, for 2014 and 2015, can be seen in Figure 1.
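The weighting described above can be sketched as follows. This is a simplified illustration, not the production method: the service area names, growth rates and expenditure shares are hypothetical, and each contribution is taken to be the previous year's expenditure share multiplied by the area's growth rate.

```python
def aggregate_growth(growth_pct, prev_year_shares):
    """Return per-area contributions (percentage points) and total growth (%).

    Each contribution is the area's share of previous-year expenditure
    multiplied by its own growth rate.
    """
    total_share = sum(prev_year_shares.values())
    contributions = {
        area: prev_year_shares[area] / total_share * growth_pct[area]
        for area in growth_pct
    }
    return contributions, sum(contributions.values())


# Hypothetical figures: two areas grow at the same rate but differ in size.
growth_pct = {"large_area": 2.0, "small_area": 2.0, "rest": 0.0}
prev_year_shares = {"large_area": 0.4, "small_area": 0.1, "rest": 0.5}

contributions, total_growth = aggregate_growth(growth_pct, prev_year_shares)
print(contributions, total_growth)
```

In this example both areas grow by 2.0%, but the larger area contributes four times as much to total growth because its expenditure share is four times as large.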

Service areas are defined by Classification of the Functions of Government (COFOG) rather than administrative department or devolved administration. As a result, estimates presented cannot be taken as direct estimates of departmental productivity.

Figure 1: Expenditure weights by service area, 2014 and 2015, UK

Source: Office for National Statistics

Notes:

- Sum of components may not equal 100 due to rounding.

- Other refers to other government services which includes services such as economic affairs, recreation, and housing.

- Public order and safety includes courts and probation services, prison service and fire service.

Inputs are composed of labour (which can be measured either directly, for example through the number of staff, or indirectly by measuring service area expenditure on staff (note 2)), procurement (expenditure on goods and services), and consumption of fixed capital. These inputs, as appropriate, are adjusted for inflation using a suitable deflator. Expenditure data used to estimate inputs growth are based on annually published Maastricht (MAAST) supplementary data tables. These tables are consistent with estimates of government deficit and debt reported to the European Commission under the terms of the Maastricht Treaty. They are published on a calendar-year basis and provide the required detailed breakdown by the Classification of the Functions of Government (COFOG).

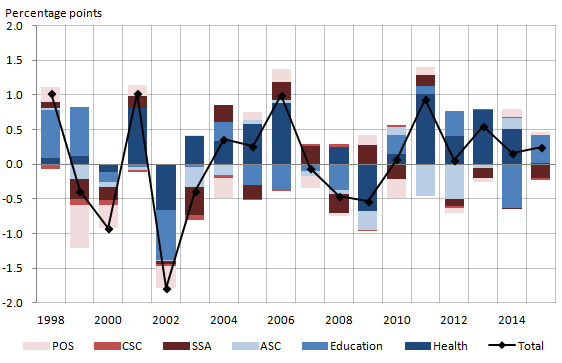

Output, on the other hand, is measured in a variety of ways, both between and within service areas, as shown in Figure 2. The approaches are categorised broadly into three types.

Quantity output

Quantity output, illustrated by the light (orange) segments, represents around 17.7% of total public service output. It captures the number of activities performed and services delivered. Growth in individual activities is weighted together using their relative cost of delivery to give growth in the output of the service area.

Quality adjusted output

Quality adjusted output, illustrated by the dark (blue) segments, represents around 44.5% of total public service output. It is calculated in the same way as quantity output, with an adjustment subsequently applied to account for changes in the quality of the services delivered. If the quality adjustment is positive, quality adjusted output will grow faster than the quantity of output.

Output-equals-inputs

“Output-equals-inputs”, illustrated by the intermediate (red) segments, represents around 37.8% of total public service output. This final approach assumes that the volume of output is equal to the volume of inputs used to create it. This convention is used when the output of a service area cannot be measured by recording individual activities. As a result, productivity is assumed to remain constant and productivity growth will always be zero.
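The first two approaches can be sketched as below. This is a minimal illustration under stated assumptions: the activity names, growth rates and cost shares are hypothetical, and the quality adjustment is applied multiplicatively, which is an assumption made here for illustration rather than a description of the method used for any particular service area.

```python
def cost_weighted_growth(activity_growth_pct, cost_shares):
    """Quantity output growth (%): activity growth rates weighted by cost share."""
    total_cost_share = sum(cost_shares.values())
    return sum(
        cost_shares[name] / total_cost_share * activity_growth_pct[name]
        for name in activity_growth_pct
    )


def quality_adjusted_growth(quantity_growth_pct, quality_change_pct):
    """Quality adjusted growth (%), assuming a multiplicative quality adjustment."""
    return ((1 + quantity_growth_pct / 100) * (1 + quality_change_pct / 100) - 1) * 100


# Hypothetical activities within one service area.
activity_growth_pct = {"activity_a": 4.0, "activity_b": 0.0}
cost_shares = {"activity_a": 0.75, "activity_b": 0.25}

quantity_growth = cost_weighted_growth(activity_growth_pct, cost_shares)
qa_growth = quality_adjusted_growth(quantity_growth, 1.0)
print(quantity_growth, qa_growth)
```

With a positive quality change, quality adjusted growth exceeds quantity growth; with no quality change, the two are equal.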

Figure 2: Output-type share by service area, 2015, UK

Source: Office for National Statistics

Notes:

- Shares may not sum to 100 due to rounding.

- QA refers to quality adjusted.

- Public order and safety includes courts and probation services, prison service and fire service.

- Other refers to other government services which includes services such as economic affairs, recreation, and housing.

A table summarising the methods for each service area is provided in Annex A and further information on methods is available in our public service productivity sources and methods article and quality and methodology information article.

Estimates in this release cover the UK and, where possible, are based on data for England, Scotland, Wales and Northern Ireland. Where data are not available for all four countries, the assumption is made that the available data are representative of the UK. This can happen for quality adjustment, output or inputs data.

It is important to note that while these productivity estimates provide a measure of the amount of output produced for each unit of input, they do not measure value for money or the wider performance of public services. They do not indicate, for example, whether the inputs have been purchased at the lowest possible cost, or the extent to which the desired outcomes are achieved through the output provided.

The estimates in this release have an open revisions policy, meaning that each time a new article is published, revisions can occur for the whole of the period. Further details and explanation of revisions to previous estimates can be found later on in this article.

We also produce experimental estimates of total public service productivity. The experimental estimates, while more timely, use different sources to the official estimates, containing less detail and necessarily involving a greater degree of estimation. As a result, these experimental estimates are not replacements for the official estimates presented in this article, and are intended to provide a more timely estimate for the more recent period. More detail on experimental total output, inputs and productivity can be found in Quarterly UK public service productivity (Experimental Statistics): July to September 2017, as well as in New nowcasting methods for more timely quarterly estimates of UK total public service productivity.

Notes for Things you need to know about this release

- To combine growth rates for each component, expenditure shares for the previous year are used in calculation of a Laspeyres index. For more information, see Robjohns (2006) Methodological Note: Annual Chain Linking.

- Agency staff are not captured as part of a service area’s labour inputs but are instead included within a service area's procurement inputs.
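Estimates in this release are presented both as year-on-year growth rates and as indices. The sketch below shows one way a series of annual growth rates can be chained into an index. The function name and the growth rates are hypothetical, and the base value of 100 is an assumption for illustration.

```python
def chain_index(growth_rates_pct, base=100.0):
    """Chain annual growth rates (%) into an index starting at `base`."""
    index = [base]
    for growth in growth_rates_pct:
        # Each year's index is the previous year's index scaled by that year's growth.
        index.append(index[-1] * (1 + growth / 100))
    return index


# Hypothetical growth rates for three consecutive years.
example_index = chain_index([1.0, -0.5, 2.0])
print([round(value, 2) for value in example_index])
```

The index has one more entry than the list of growth rates, because the first entry is the base year.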

4. Total public service productivity rises as growth in output outstrips growth in inputs

In 2015, productivity for total public services was estimated to have increased by 0.2%, as output grew by 1.5%, exceeding inputs growth of 1.2%, as shown in Figure 3. This followed a productivity rise of 0.2% in 2014, when total public service output and inputs grew by 1.6% and 1.5% respectively.

Figure 3: Total public service inputs, output and productivity growth rates, 1998 to 2015, UK

Source: Office for National Statistics

Notes:

- Percentage growth rate shows the year-on-year growth.

While experiencing weak growth over the period as a whole, total public service productivity has been on an upward trend in recent years, increasing consecutively over the last six years. Between 2009 and 2015, total public service productivity is estimated to have increased by 2.0%, or around 0.3% growth per year. This represents the longest sustained period of growth in public service productivity since the start of the series in 1997.
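The average annual rate quoted above can be checked with a short calculation. This sketch assumes the average is a compound (geometric) rate over the six years from 2009 to 2015; the function name is hypothetical.

```python
def average_annual_growth(total_growth_pct: float, years: int) -> float:
    """Compound average annual growth (%) implied by total growth over `years` years."""
    return ((1 + total_growth_pct / 100) ** (1 / years) - 1) * 100


# 2.0% total growth between 2009 and 2015 (six years of growth).
avg_2009_2015 = average_annual_growth(2.0, 2015 - 2009)
print(f"{avg_2009_2015:.2f}% per year")
```

Rounded to one decimal place, this agrees with the figure of around 0.3% per year.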

Breaking down the productivity estimate into the underlying changes in inputs and output of total public services, Figure 3 also reveals that inputs and output have followed a broadly similar upwards trend. While initially experiencing an upwards trend and growing at a faster rate than output for much of the early 2000s, growth in inputs slowed over the latest decade and contracted for the first time in 2011. This coincided with spending reductions in some departments as part of the Spending Review 2010. As a result, growth of inputs has remained relatively weak between 2009 and 2015, growing on average by 0.5% per year. On the other hand, output, while experiencing relatively slower growth in recent years, has consistently outperformed inputs since 2010, growing on average by 0.8% per year.

5. Education services driving growth in public service productivity in 2015

Total public service productivity grew by 0.2% in 2015, following on from a 0.2% increase in the previous year. Figure 4 shows the extent to which the different service areas have contributed to the overall change in total public service productivity in the last two years. In particular, education and healthcare services have been important factors in driving the change in the rate of growth, as together they comprise more than half of the sector.

Figure 4: Contributions to growth of total public service productivity by service area, 2014 and 2015, UK

Source: Office for National Statistics

Notes:

- Individual contributions may not sum to the total due to rounding.

- The figure does not include indirectly measured output, which does not contribute to productivity growth under the "output-equals-inputs" assumption.

- Public order and safety includes courts and probation services, prison service and fire service.

Education was the largest upward contributor in 2015, having made a downward contribution in 2014 that was driven in particular by a fall in its quality adjustment. The upward contribution in 2015 was due in part to a contraction in inputs while output for the service area rose. In contrast to the previous year, the impact of the quality adjustment was minimal, suggesting quality remained relatively constant between 2014 and 2015.

Growth in total public service productivity was further bolstered by positive contributions from public order and safety, and healthcare services, although both to a lesser extent than in 2014.

This was partially offset by contractions in the remaining service areas’ productivity in the same year, with social security administration (SSA) (note 1) making the largest downward contribution to total public service productivity in 2015. The recent fall in SSA productivity was driven by rising inputs, while output fell again in 2015, the service area’s sixth consecutive contraction. Some of the volatility in the series may be caused by changes in the benefits provided, affecting inputs through additional expenditure on implementation and output through changes in eligibility and thus the number of applications.

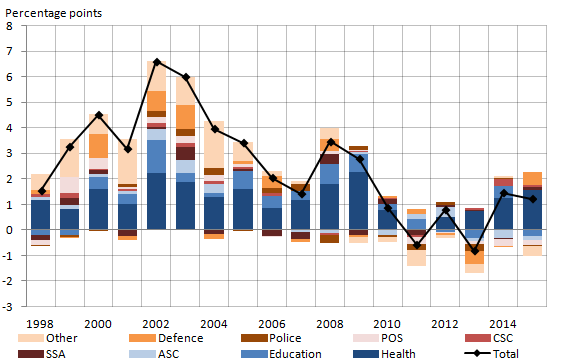

Figure 5 looks at the long-run trend in the decomposition of total public service productivity, carrying the series in Figure 4 back to 1997. It shows that public service productivity has grown consecutively over the last six years with changes across the years being driven predominantly by the healthcare and education services.

Figure 5: Contributions to growth of total public service productivity by service area, 1998 to 2015, UK

Source: Office for National Statistics

Notes:

- Individual contributions may not sum to the total due to rounding.

- The figure does not include indirectly measured output, which does not contribute to productivity growth under the "output-equals-inputs" assumption.

- POS stands for Public Order and Safety, CSC stands for Children's Social Care, SSA stands for Social Security Administration, ASC stands for Adult Social Care.

There is no observed contribution to total public service productivity from service areas measured indirectly: under the “output-equals-inputs” convention, any growth or contraction in inputs is matched by an equal growth or contraction in output.

The zero contribution from these service areas has a dampening effect on total public service productivity growth, relative to their expenditure weight. Excluding police, defence and other government services (note 2), total public service productivity is estimated to have risen by 0.4% in 2015.

Notes for: Education services driving growth in public service productivity

- Social security administration includes some activities carried out by the Department for Work and Pensions (DWP) and activities carried out by other government departments such as the administration of tax credits and child benefits by Her Majesty’s Revenue and Customs (HMRC).

- Comprises services such as economic affairs, recreation, and housing.

6. Total public service inputs rise again in 2015 but slower than in 2014

In 2015, the volume of total public service inputs grew for the second year running, increasing by 1.2% — although this was slightly slower than the 1.5% growth in 2014. Figure 6 shows how much the different service areas contributed towards the overall change in 2014 and 2015.

In both years, healthcare was the primary driver of total inputs growth, reflecting both the service area’s year-on-year growth (3.6% in 2014 and 4.4% in 2015) and its large size relative to total public services. Defence was the second largest upward contributor, adding 0.5 percentage points to total public service inputs growth in 2015. This, however, largely reflects a change in data sources and methods, as the financial year ending 2016 was the first year in which departments reported R&D under the new European System of Accounts 2010 (ESA 2010) definition; it had previously been modelled. Other government services (note 1) and education detracted from growth, together reducing total inputs growth by 0.6 percentage points.

Figure 6: Contributions to growth of total public service inputs by service area, 2014 and 2015, UK

Source: Office for National Statistics

Notes:

- Individual contributions may not sum to the total due to rounding.

- Public order and safety includes courts and probation services, prison service and fire service.

- Other refers to other government services, which comprise services such as economic affairs, recreation, and housing.

Figure 7 decomposes annual total public service inputs growth from 1998 to 2015 into contributions from individual service area inputs growth and helps identify some of the main drivers behind this.

Figure 7: Contributions to growth of total public service inputs by service area, 1998 to 2015, UK

Source: Office for National Statistics

Notes:

- Individual contributions may not sum to the total due to rounding.

- Public order and safety includes courts and probation services, prison service and fire service.

- Other refers to other government services, which comprise services such as economic affairs, recreation, and housing.

- POS stands for Public Order and Safety, CSC stands for Children's Social Care, SSA stands for Social Security Administration, ASC stands for Adult Social Care.

Prior to 2010, most service areas made positive contributions toward growth in total inputs, which grew on average by 3.5% per year. Since 2010, however, the picture has become more variable with all service areas, barring healthcare, making both positive and negative contributions over the last six years. As a result, average growth between 2009 and 2015 slowed to just 0.5% per year. The weakness of total public service inputs growth since 2010 has been a defining feature of recent growth in total UK public service productivity.

The accounting framework can also be re-arranged to offer a breakdown of movements in total public service inputs measured by component, as shown in Figure 8. In this format, the growth of inputs in 2015 was driven by increases in total public service intermediate consumption, which contributed 1.1 percentage points out of 1.2 percentage points of total inputs growth. This was slightly higher than the intermediate consumption component’s contribution in 2014 and higher than the average annual contribution since 2009 (0.5 percentage points).

Capital input continued its upward trend, contributing 0.1 percentage points to total inputs growth in 2015, while labour input made a slight positive contribution to inputs growth in the same year.

Figure 8: Contributions to growth of total public service inputs by component, 2002 to 2015, UK

Source: Office for National Statistics

Notes:

- Individual contributions may not sum to total due to rounding.

Notes for: Total public service inputs rise again in 2015 but slower than in 2014

- Comprises services such as economic affairs, recreation, and housing.

7. Quality adjusted total public service output rises again in 2015 but slower than in 2014

In 2015, quality adjusted total public service output grew for the second consecutive year, increasing by 1.5% — although this was slightly slower than the 1.6% growth in 2014. Figure 9 shows total public service quality adjusted output in terms of annual changes and decomposed into contributions of broad service areas. Similar to total inputs, there was a mixture of positive and negative contributions to total output in both years.

Healthcare services were, again, the main positive contributor to growth in total output in 2015, with healthcare quality adjusted output growing by 4.5% in 2015. This was partly cancelled out by a negative contribution from other government services (note 1).

Figure 9: Contributions to growth of total public service output by service area, 2014 and 2015, UK

Source: Office for National Statistics

Notes:

- Individual contributions may not sum to the total due to rounding.

- Public order and safety includes courts and probation services, prison service and fire service.

- Other refers to other government services, which comprise services such as economic affairs, recreation, and housing.

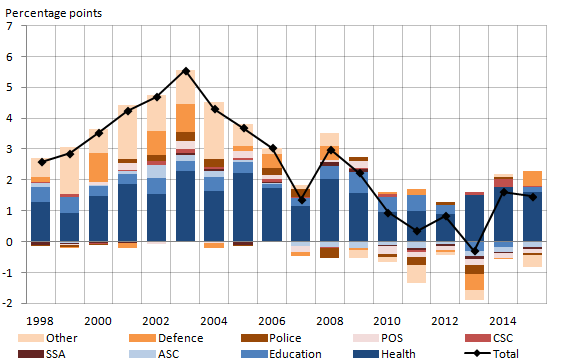

Figure 10 looks at the long-run trend in the decomposition of total public service output, carrying the series in Figure 9 back to 1997. Here the pattern since 2009 has, much like inputs, been weak by earlier standards, although to a lesser extent. One noteworthy feature of this representation of the data is the relatively consistent, positive contributions from the healthcare service over the entire period. This chiefly reflects growth in the quantity of healthcare provided, which should be seen in the context of healthcare demands and its drivers (that is, medical advances, healthcare institutions and public expectations). A fuller analysis of healthcare productivity can be found in the article Public service productivity estimates: healthcare 2015, which contains more detailed information on both the estimates and the methods used to create them. The experience for all other services has been more variable, making both positive and negative contributions over the last six years.

Figure 10: Contributions to growth of total public service output by service area, 1998 to 2015, UK

Source: Office for National Statistics

Notes:

- Individual contributions may not sum to the total due to rounding.

- Public order and safety includes courts and probation services, prison service and fire service.

- Other refers to other government services, which comprise services such as economic affairs, recreation, and housing.

- POS stands for Public Order and Safety, CSC stands for Children's Social Care, SSA stands for Social Security Administration, ASC stands for Adult Social Care.

Notes for: Quality adjusted total public service output rises again in 2015 but slower than in 2014

- Comprises services such as economic affairs, recreation, and housing.

8. Quality remains unchanged between 2014 and 2015

In line with recommendations from the Atkinson Review, quality adjustments are applied to the measures of output for three service areas: healthcare, education, and public order and safety (POS). This is the first year in which estimates of POS output have been quality adjusted; further details on the sources and methods used can be found in the article Quality adjustment of public service public order and safety output: current method. The purpose of these quality adjustments is to reflect the extent to which the goods and services provided either succeed in delivering their intended outcome or are responsive to users’ needs, which we otherwise do not capture.

To show the effect of these quality adjustments on overall total UK public service productivity, Figure 11 compares total public service productivity with and without the quality adjustments applied.

Figure 11: Quality and non-quality adjusted total public service productivity indices, 1997 to 2015, UK

Source: Office for National Statistics

Notes:

- Percentage growth rate shows the year-on-year growth.

- QA refers to quality adjusted.

- NQA refers to non-quality adjusted.

The indices show that between 1997 and 2015, if total public service output had not been quality adjusted and remained consistent with the national accounts, productivity would have fallen by 5.5%. Compared with quality adjusted total public service productivity, which grew by 1.1% over the same period, this suggests that the impact of overall quality in public services has been positive, with the quality of total output improving.
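One way to summarise the gap between the two indices is to compare their 2015 levels, as sketched below. The function name is hypothetical, both indices are assumed to equal 100 in 1997, and the resulting figure is a derived illustration based on the rounded changes quoted above, not a published estimate.

```python
def relative_index_gap(qa_change_pct: float, nqa_change_pct: float) -> float:
    """Percentage by which the QA index exceeds the NQA index, both based at 100."""
    qa_index = 100 * (1 + qa_change_pct / 100)
    nqa_index = 100 * (1 + nqa_change_pct / 100)
    return (qa_index / nqa_index - 1) * 100


# Cumulative changes between 1997 and 2015 quoted in this release.
quality_gap = relative_index_gap(1.1, -5.5)
print(f"QA index is about {quality_gap:.1f}% above the NQA index in 2015")
```

On these rounded figures, the quality adjusted index ends the period roughly 7% above the non-quality adjusted index.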

Figure 12 isolates this impact, showing the year-on-year growth in the quality adjusted output for total UK public services since 1998 (matching that shown in Figure 9 and Figure 10). The bars represent the contribution to changes in quality adjusted output from changes in quantity and changes in quality. Holding other factors constant, improvements in the quality of goods and services provided would cause the series to increase — as total non-quality adjusted output remains constant while quality rises.

Figure 12: Contribution to quality adjusted output growth by component, 1998 to 2015, UK

Source: Office for National Statistics

Notes:

- Components may not sum to total due to rounding.

- QA refers to quality adjusted.

- NQA refers to non-quality adjusted.

While quality adjusted output growth has been broadly positive throughout the period, contracting only in 2013 and averaging around 2.5% per year since 1997, the quality adjustment component has been somewhat more varied. During much of the period, quality grew, averaging around 0.6 percentage points per year between 1997 and 2011, as shown in Figure 12. Then, between 2011 and 2014, the overall quality of total public service output fell and made a negative contribution to total public service quality adjusted output. In 2015, the overall quality of public services is estimated to have remained unchanged, making a zero contribution to growth in quality adjusted total public service output.

Figure 13 expands on Figure 12, decomposing the contribution of total quality adjustment into the changes in quality by service area. From this, we can observe the experience of each service area and the extent to which they have contributed to overall change in total public service quality adjusted output.

Figure 13: Contribution to quality adjusted output growth by component and service area, 1998 to 2015, UK

Source: Office for National Statistics

Notes:

- Components may not sum to total due to rounding.

- Public order and safety includes courts and probation services, prison service and fire service.

For healthcare, the impact of its quality adjustment has been chiefly positive, with some variation in the size of the effect, contributing upwards in all years with the exception of 2001. The POS quality adjustment, on the other hand, made upward contributions until 2010, but has made downward contributions in each year since. This was due largely to the negative impact of the prison safety adjustment, reflecting increases in the number of reported self-harm and assault incidents in prisons.

The education quality adjustment has been the largest contributor to overall public service quality. Prior to 2012 it was positive, adding 0.4 percentage points per year to growth in total quality adjusted output between 1997 and 2011. However, its experience in recent years has differed, acting as the main driver of decline of overall quality between 2012 and 2014.

Caution should, however, be used when interpreting the change in the quality adjusted output trend of education from 2008 onwards. Over this period, there have been changes to the examinations that count towards school performance statistics in England, which influenced both the number and type of examinations sat by some pupils. As a result, some of the change in attainment statistics used for quality adjustment may not be entirely caused by changes in the quality of education provided. In particular, changes to school performance tables announced by the Department for Education, as a result of Professor Alison Wolf’s review of vocational education (PDF, 2.79MB), limited the size and number of non-GCSEs that counted towards performance, which affected attainment statistics from academic year 2012 to 2013 (Revised GCSE and equivalents results in England, 2013 to 2014 (PDF, 1.42MB)).

Further information is available on the methods of quality adjustment and their impact on estimates of output and productivity for healthcare (PDF, 329KB), education, and public order and safety.

9. Indirectly measured service output

For service areas where it is difficult to estimate the quantity of output (because of a lack of market transactions or because the services are collectively consumed), it is assumed that the volume of output in a given year is equal to the volume of inputs used in producing it. This is known as the “output-equals-inputs” convention and, as a by-product, it means that estimated productivity growth for these service areas is zero. This applies to the police, defence and other government services (note 1), which combined account for 32.3% of total public service expenditure in 2015.

In addition to police, defence, and other services, the “output-equals-inputs” convention is also applied to approximately 10% of healthcare output (capturing services delivered by non-NHS providers). Children’s social care (CSC) output relating to non-looked after children (66% of all CSC output) is also measured indirectly using the volume of inputs to those services. Together these indirectly measured outputs account for a further 5.5% of total expenditure.

These zero contributions to productivity growth from indirectly measured or “output-equals-inputs” service areas limit the growth in total public service productivity. The extent to which they affect growth of total public service productivity is proportional to their share of total expenditure. The proportion of total expenditure for indirectly measured service area output has been steadily decreasing over the period observed, from 43.7% in 1997 to 37.8% in 2015. This has been driven chiefly by defence’s share shrinking. The impact of indirectly-measured output on total public service productivity has therefore diminished over the time series.
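The shares quoted in this section can be reconciled with a short calculation. The variable names below are hypothetical; the figures are the rounded percentages given in the text.

```python
# Shares of total public service expenditure in 2015 (%), as quoted in this section.
police_defence_other_share = 32.3   # police, defence and other government services
other_indirect_share = 5.5          # indirectly measured healthcare and CSC output

indirect_share_2015 = police_defence_other_share + other_indirect_share
indirect_share_1997 = 43.7

# Change in the indirectly measured share over the period, in percentage points.
change_in_share = indirect_share_2015 - indirect_share_1997

print(f"2015 indirectly measured share: {indirect_share_2015:.1f}%")
print(f"Change since 1997: {change_in_share:.1f} percentage points")
```

The two components sum to the 37.8% quoted for 2015, a fall of 5.9 percentage points from the 1997 share.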

Notes for: Indirectly measured service output

- Comprises services such as economic affairs, recreation, and housing.

10. What’s changed in this release?

In line with the published revisions policy (PDF, 58KB), revisions have been made to estimates in this release for all service areas due to:

improved methods resulting from the ONS development programme for public service productivity

revisions made to data by data providers

the replacement of projected data with actual data

re-estimation of forecasts and backcasts

All estimates, by definition, are subject to statistical “error”, but in this context, the word refers to the uncertainty inherent in any process or calculation that uses sampling, estimation or modelling. Most revisions reflect either the adoption of new statistical techniques, or the incorporation of new information, which allows the statistical error of previous estimates to be reduced.

Public service productivity estimates operate an open revisions policy. This means that new data or methods can be incorporated at any time and will be implemented for the entire time series. As this article is produced using more timely data it involves a degree of estimation where data are incomplete.

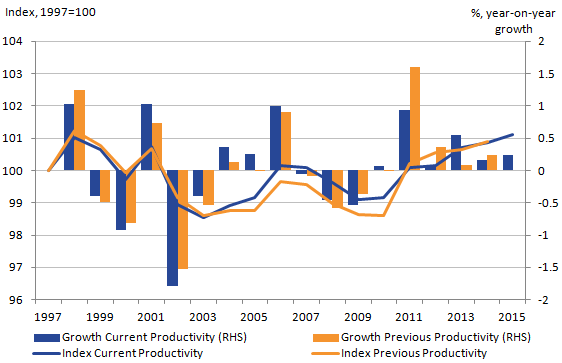

Figure 14 shows the combined effect of the changes in data and methodology relative to the previous publication. After these revisions, the estimate of total public service productivity growth in 2014 is unchanged at 0.2%.

Figure 14: Revisions to growth rates and indices of total public service productivity from previously published estimates, 1997 to 2015, UK

Source: Office for National Statistics

Notes:

- RHS stands for right-hand side.

- Percentage growth rate shows the year-on-year growth.

Over the whole time series, Figure 14 also shows that, although growth rates in individual years have been revised, the overall picture is unchanged: productivity grew on average by 0.1% per year and was 0.9% higher in 2014 than in 1997.
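As a rough check on how these two figures relate, the sketch below derives a compound average annual growth rate from the cumulative change. It assumes the average is a compound (geometric) rate over the 17 years from 1997 to 2014; the averaging method actually used is not stated here, so this is illustrative only.

```python
def average_annual_growth(start_index, end_index, years):
    """Compound average annual growth rate between two index values."""
    return (end_index / start_index) ** (1.0 / years) - 1.0


# Index 0.9% higher in 2014 than in 1997, as quoted in the text.
rate = average_annual_growth(100.0, 100.9, 2014 - 1997)
print(f"{rate:.1%}")  # roughly 0.05% a year, which rounds to 0.1% at one decimal place
```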

The largest revisions, as shown in Figure 15, are to the 2011 and 2013 growth rates, due partly to revisions in education inputs, downwards and upwards respectively.

Figure 15: Revisions to total public service productivity growth rates, 1998 to 2014

Source: Office for National Statistics

Incorporation of quality adjustment for public order and safety (POS) output

In line with recommendations from the Atkinson Review, a quality adjustment is applied to measures of public service output where appropriate. This release is the first in which estimates of public order and safety (POS) output have been quality adjusted.

Within the POS service area there are four main components: fire-protection, courts (which itself has five further sub-components), probation and prisons. Quality adjustments are applied to the components associated with the criminal justice system (CJS) to take account of changes in quality and improvements in associated outcomes. No quality adjustment is applied to fire-protection services or to county court services, which deal with civil cases, because these services are deemed to have different outcomes from the criminal justice elements of POS.

The criminal justice quality adjustment has itself four components. The first relates to achieving an overall outcome of the service (the recidivism adjustment) and the remaining three relate to specific target outcomes for subcomponents of the CJS:

recidivism adjustment

prison safety adjustment

custody escapes adjustment

courts’ timeliness adjustment

As a result of these changes, estimates of the service area’s output have been revised throughout the whole time series. However, as the “criminal justice” element of public order and safety has a very small weighting relative to total UK public services (2.5% in 2015), the overall impact on quality-adjusted total public service output has been positive but marginal.

Further information on the sources and methods used, as well as the impact on public order and safety output, can be found in ONS’s articles Quality adjustment of public service public order and safety output: current method and Quality adjustment of public service criminal justice system output: experimental method.

Change to children’s social care output

Within the previous year’s release (Public service productivity estimates: total public service, UK, 2014) it was noted that as a result of different data sources being used, children’s social care (CSC) inputs and output were misaligned, in particular with reference to the service area’s indirectly measured output.

For background, CSC output is calculated in two parts: looked after children (LAC) and non-looked after children (non-LAC). A child is classed as looked after (LAC) by a local authority if a court has granted an order to place them in care, or a council’s children’s services department has cared for the child for more than 24 hours. A child is classed as non-looked after (non-LAC) if they are not taken out of their home environment but are being monitored. While output for LAC is directly measured, non-LAC is indirectly measured using deflated expenditure on CSC services provided by the respective countries’ administrations.

For this year’s publication, a new experimental method of measuring output associated with non-LAC has been used, which makes these estimates inconsistent with the UK National Accounts. The purpose is to better align what is captured within CSC output and inputs. This is achieved by classifying all expenditure that is not used to provide services for LAC as expenditure used to provide services for non-LAC.

Although the revisions vary from year to year, over the whole period (1997 to 2015) average growth in CSC output was revised up by 0.1 percentage points, from 2.3% to 2.4%. Given this and the service area’s relatively small size (2.8% of total expenditure in 2015), the impact of this change on quality-adjusted total output is negligible.

Composite intermediate consumption deflator methods change

For a number of service areas, the volume of intermediate consumption inputs is estimated by deflating the associated expenditure by a suitable composite deflator. This deflator is a chain-linked Paasche price index composed of a weighted basket of price indices specific to the service area, these price indices often being proxies due to limited reported actual price data.
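A minimal sketch of how a chain-linked Paasche composite deflator could be built and applied is shown here. The function names, price relatives, expenditure shares and expenditure figures are all hypothetical; the sketch illustrates the general index formula only and is not the production method.

```python
def paasche_link(price_relatives, current_shares):
    """Paasche price link between two adjacent years.

    price_relatives: p_t / p_(t-1) for each component price index.
    current_shares: year-t expenditure shares for each component, summing to 1.
    """
    if abs(sum(current_shares) - 1.0) > 1e-9:
        raise ValueError("expenditure shares must sum to 1")
    # A Paasche index is the current-share-weighted harmonic mean of price relatives.
    return 1.0 / sum(w / r for w, r in zip(current_shares, price_relatives))


def chain_link(links, base=100.0):
    """Multiply year-on-year links together to form a chained index."""
    index = [base]
    for link in links:
        index.append(index[-1] * link)
    return index


def deflate(current_price_expenditure, deflator, base=100.0):
    """Divide current price expenditure by the deflator to estimate volumes."""
    return [e / (d / base) for e, d in zip(current_price_expenditure, deflator)]


# Hypothetical basket of two proxy price indices over two year-on-year steps.
links = [
    paasche_link([1.02, 1.05], [0.60, 0.40]),
    paasche_link([1.01, 1.04], [0.55, 0.45]),
]
composite_deflator = chain_link(links)
volumes = deflate([200.0, 210.0, 221.0], composite_deflator)
print(composite_deflator, volumes)
```

Because the weights are current-year shares, the Paasche link is never higher than the arithmetic average of the same price relatives using those weights.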

The composite deflators used in this year’s publication have been revised because of improvements in both the implementation of the methodology and the data used to produce them. First, in line with recommendations from the Johnson Review (PDF, 175KB), RPI (Retail Prices Index) indices have been replaced with CPI (Consumer Prices Index) indices where appropriate. Similarly, new deflators have been matched to the different types of expenditure where previously a generic deflator was used. Second, the time series of the expenditure breakdown, taken from the Subjective Analysis Returns (SAR) (an extension of the subjective analysis in the Local Authority General Fund Revenue Account Outturn suite), has been expanded. This reduces the amount of estimation needed for the weights given to the different deflators. Finally, the method used to deal with changes in the reporting of expenditure has been made consistent across the service areas that use these deflators.

The impact of the new composite intermediate consumption deflators differs between service areas. Overall, however, the effect on total inputs is minimal.

Calendarisation method of data reported in financial and academic year

To convert reported data into calendar years, a technique known as cubic splining splits each annual financial or academic year volume or spending measure into quarterly data, which are then re-aggregated to create calendar year figures. For this year’s publication, the method used has been updated, where possible, from X-11 ARIMA to X-13 ARIMA (PDF, 1.80MB) in line with the latest national accounts practices. X-13 ARIMA has improved methods for forecasting and backcasting data, as well as for interpolating a trend within a data series.

As a result of incorporating this change, some of the annual growth rates have changed as the volume of output and inputs calculated from reported data has been apportioned between calendar years in a slightly different manner. However, the overall effect is minimal.
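The split-and-re-aggregate logic can be sketched as follows. For simplicity, this sketch divides each financial year evenly across its four quarters rather than fitting a cubic spline or using X-13 ARIMA, so it is a deliberately simplified stand-in for the method described above. The function name and the figures are hypothetical.

```python
def financial_to_calendar(fye_totals):
    """Convert financial year totals (April to March) to calendar year totals.

    fye_totals maps the financial year ending (for example 2014 for April 2013
    to March 2014) to an annual total. Each year is split evenly into quarters,
    which is a simplification of the spline-based split.
    """
    quarterly = {}
    for fye, total in fye_totals.items():
        quarter_value = total / 4.0
        # April to December fall in the calendar year before the year ending.
        for quarter in (2, 3, 4):
            quarterly[(fye - 1, quarter)] = quarter_value
        # January to March fall in the calendar year of the year ending.
        quarterly[(fye, 1)] = quarter_value

    calendar = {}
    for year in sorted({y for y, _ in quarterly}):
        quarters = [quarterly.get((year, q)) for q in (1, 2, 3, 4)]
        # Only report calendar years for which all four quarters are available.
        if all(value is not None for value in quarters):
            calendar[year] = sum(quarters)
    return calendar


calendar_totals = financial_to_calendar({2013: 100.0, 2014: 120.0, 2015: 140.0})
print(calendar_totals)  # {2013: 115.0, 2014: 135.0}
```

A different quarterly split, such as one produced by a spline, apportions the same annual totals slightly differently between calendar years, which is why a change of method moves some annual growth rates.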

Revision to healthcare quantity output

It should be noted that the quantity output figures included in this release differ from figures in the UK National Accounts, The Blue Book. This is partly because of:

the incorporation of a number of methods improvements affecting output data in this release for each of England and Wales between financial year ending (FYE) 2013 and FYE 2015

differences in the deflator used to calculate non-NHS output growth

the incorporation of an updated method for converting financial year data into calendar years for the data in this release

The methods improvements affecting output data for England and Wales are intended to be incorporated into the June edition of the Quarterly National Accounts and into the 2018 Blue Book. These differences are noted in the December edition of the Quarterly National Accounts and more information on the revisions is available in Public service productivity estimates: healthcare 2015. All else being equal, the indicative impact of these changes on annual chained volume measure GDP growth is no greater than 0.1 percentage points.

Improved reporting of academies in education labour inputs

The majority of education labour input is measured directly using teacher and school support staff numbers and average salaries disaggregated into occupational groups such as head teachers, classroom teachers and teaching assistants. A small proportion of labour input is measured indirectly using deflated expenditure. This relates to things such as administrative work carried out by staff in central government bodies.

Along with maintained schools, the labour input of academies is measured directly using data on the number of staff and average salaries. However, academy staff had previously not been included in the direct labour calculation prior to 2010, and average salaries had been assumed to be the same as those for maintained schools, because no data were available.

We now have access to data for both academy staff numbers prior to 2010 and average salaries for academy staff over the entire period. For this article, these have been combined with existing estimates. This provides a more realistic trend in the education labour volumes and is a more accurate estimate of academies’ inputs compared with that previously estimated.

To generate an overall labour inputs growth rate, the growth rate in academies’ labour is combined with that of the other labour component, each weighted by its share of total labour expenditure.

The weighting for academies has also been improved. Previously, the weight for academies’ labour input used the total implied expenditure on academies’ labour (number of staff multiplied by average salary). To better reflect academies’ expenditure share, implied academies’ expenditure relative to local authority labour expenditure is now used to proxy the reallocation of spend associated with academy labour. This allows academies to be treated consistently with maintained schools.

12. Annex A: Methods for estimating output and inputs for each service area

This table provides an overview of the methods for estimating output and inputs for each service area to enable users to compare quickly how estimates for each service area are derived.

Table 1: Table of methods

| Service area | Output | Inputs |
|---|---|---|
| Healthcare | Quantity of delivered healthcare services including hospital and community health services, family health services and GP prescribing combined as cost weighted activity index. Non-NHS provision uses “output = inputs” convention. Adjusted for quality of delivered services including survival rates, health gain, waiting times and results from the National Patient Survey. | Direct measure of volume growth for labour inputs based on full-time equivalent employee numbers in the health service. Indirect measure of volume growth for goods and services and capital consumption dividing current price expenditure by appropriate deflators. Individual growth rates multiplied by previous year expenditure shares to give chain-linked Laspeyres volume index of total inputs. |
| Education | Quantity of full-time equivalent publicly funded pupil and student numbers in pre-school education, maintained primary, secondary and special schools, and further education colleges, adjusted for attendance combined as cost weighted activity index. Adjusted for quality using change in average point scores at GCSE or equivalent level. | Direct measure of volume growth for local authority labour inputs based on full-time equivalent teacher and support staff numbers adjusted for hours worked. Indirect measure of volume growth for central government labour, general government goods and services and general government capital consumption by dividing current price expenditure by appropriate deflators. Individual growth rates multiplied by previous year expenditure shares to give chain-linked Laspeyres volume index of total inputs. |
| Social security administration | Chain volume measure based on aggregation of output from administration of individual benefit types weighted by associated unit costs. Not quality-adjusted. | Current price expenditure on labour, goods and services and capital consumption divided by appropriate deflators to give estimated volume of inputs. Individual growth rates multiplied by previous year expenditure shares to give Laspeyres volume index of total inputs. |
| Adult social care | Quantity of social services activities measured in terms of time or number of items combined as cost-weighted activity index. Estimates for 2014 and 2015 have been estimated using Holt-Winters forecasting on historical data, as changes in the data source have prevented measurement of output that is consistent with previous years. Not quality-adjusted. | Current price expenditure on labour, procurement for independent care, other procurement and capital consumption divided by appropriate deflators to give estimated volume of inputs. Individual growth rates multiplied by previous year expenditure shares to give Laspeyres volume index of total inputs. |
| Children’s social care | Quantity of looked after children as cost weighted activity index. Non-looked after children are indirectly measured using deflated expenditure. Looked after children and non-looked after children combined using expenditure shares to give cost-weighted volume index. Not quality-adjusted. | Current price expenditure on labour, goods and services and capital consumption divided by appropriate deflators to give estimated volume of inputs, separately for publicly and independently provided care. Individual growth rates multiplied by previous year expenditure shares to give Laspeyres volume index of total inputs. |
| Public order and safety | Individual cost-weighted activity indices for the fire protection service, the prison service, the courts and the probation service combined using expenditure shares to give cost-weighted volume index. Elements associated with the Criminal Justice System are adjusted for quality using change in a suite of outcome related indicators. Other areas (such as fire protection services) are not quality-adjusted. | Current price expenditure on labour, goods and services and capital consumption – separately for the courts, the fire service and the prison service – divided by appropriate deflators to give estimated volume of inputs for each component. Individual growth rates multiplied by previous year expenditure shares to give Laspeyres volume index of total inputs. |
| Police | “Output-equals-inputs” convention. | Current price expenditure on labour, goods and services and capital consumption divided by appropriate deflators to give estimated volume of inputs. Individual growth rates multiplied by previous year expenditure shares to give Laspeyres volume index of total inputs. |
| Defence | “Output-equals-inputs” convention. | Total current price expenditure divided by derived deflator to give estimated volume of inputs, converted to index. |
| Other | “Output-equals-inputs” convention. | Total current price expenditure of all included service areas divided by GDP deflator to give estimated volume of inputs, converted to index. |
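Several of the inputs methods in Table 1 multiply individual growth rates by previous year expenditure shares to give a chain-linked Laspeyres volume index. A minimal sketch of that calculation is given here; the function name, component names, growth rates and expenditure figures are hypothetical and for illustration only.

```python
def chained_laspeyres_volume_index(component_growth, previous_year_expenditure, base=100.0):
    """Chain-linked Laspeyres volume index of total inputs.

    component_growth: one dict per year-on-year step, mapping component name to
        its volume growth rate as a fraction (0.02 means 2%).
    previous_year_expenditure: one dict per step, mapping component name to
        current price expenditure in the earlier of the two years.
    """
    index = [base]
    for growth, spend in zip(component_growth, previous_year_expenditure):
        total_spend = sum(spend.values())
        # Weight each component's growth by its previous year expenditure share.
        aggregate_growth = sum(
            (spend[name] / total_spend) * growth[name] for name in growth
        )
        # Chain-link: apply this year's aggregate growth to last year's index level.
        index.append(index[-1] * (1.0 + aggregate_growth))
    return index


component_growth = [
    {"labour": 0.02, "goods_and_services": 0.04, "capital_consumption": 0.00},
    {"labour": 0.01, "goods_and_services": 0.03, "capital_consumption": 0.02},
]
previous_year_expenditure = [
    {"labour": 50.0, "goods_and_services": 40.0, "capital_consumption": 10.0},
    {"labour": 52.0, "goods_and_services": 42.0, "capital_consumption": 10.0},
]
inputs_index = chained_laspeyres_volume_index(component_growth, previous_year_expenditure)
print(inputs_index)
```

In the first step the weights are 50%, 40% and 10%, so aggregate growth is 2.6% and the index moves from 100 to 102.6. The weights are then updated for the second step.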

13. Annex B: Breakdown on services captured within other government services

This table provides a more detailed breakdown of the services captured within other government services, and the proportion of other government services expenditure that each accounts for in 2015.

Table 2: Breakdown of other government service expenditure

| Other government service area | Share of expenditure (%) |
|---|---|
| General government services | 25.3 |
| Economic affairs | 31.8 |
| Recreation, culture & religion | 15.4 |
| Environmental protection | 12.7 |
| Housing & community amenities | 9.4 |
| Other | 5.4 |

Source: Office for National Statistics

Notes:

- Sum of components may not equal 100 due to rounding.