1. Main points

- Non-official sources are an increasingly useful resource, important for fulfilling Sustainable Development Goals (SDGs) reporting commitments and promoting inclusion and sustainability.

- It is essential to have a clear and transparent protocol for assessing non-official sources for use in UK SDGs reporting.

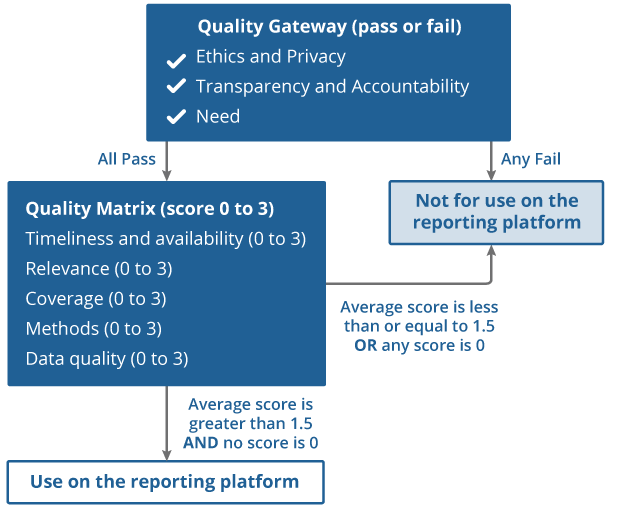

- We present a protocol with an initial “pass or fail” gateway on key criteria, followed by a quality assessment using a simple scoring system.

- This protocol is aligned with official quality guidance and the three pillars of the UK Code of Practice for Statistics: trustworthiness, quality, and value.

- This protocol provides transparency on the method used for assessing non-official sources for SDGs reporting.

- The target audience is non-governmental organisations that may produce statistics relevant to SDGs, and any organisation working with SDGs data.

- While the protocol is tailored to SDGs reporting, we welcome others to adapt it for assessing non-official sources in other contexts.

2. The Sustainable Development Goals and sources

The Sustainable Development Goals (SDGs) are central to the United Nations (UN) 2030 Agenda, which covers people, planet, prosperity, peace, and partnership. There are 17 goals, covering environmental, social, and economic issues, with 169 targets and 247 indicators across these.

As the United Kingdom (UK) national statistical institute, the Office for National Statistics (ONS) has a remit to monitor and report on the SDGs for the UK through our National Reporting Platform (“the Platform”).

We collect robust, quality-assured data against the methodology set by the UN, also adhering as closely as possible to the UK Statistics Authority (UKSA) Code of Practice (“the Code”). You can find more details on the UK’s approach to SDG reporting on the information and publications pages on the Platform.

Value of non-official sources

By mid-2021, the UK had achieved 80% coverage for reporting across the 247 SDG indicators. The breadth of the SDGs and the required breakdowns make it increasingly challenging to achieve much greater reporting coverage through official sources alone.

The UN Economic Commission for Europe (UNECE) task force on the Value of Official Statistics recognises the increased demands for data and associated challenges for official statistics. In line with addressing such demands, the ONS is a key partner of the Inclusive Data Charter (IDC). This is a global initiative for improving and strengthening data disaggregation to help ensure no one and nowhere is left behind. This includes a commitment to using as wide a range of data sources as possible. There is an increasing recognition of the importance of inclusive data in official statistics. This is reflected in the work of the Centre for Equalities and Inclusion at the ONS, and the Inclusive Data Taskforce established in October 2020 by the National Statistician. All of these initiatives work with official and non-official stakeholders to improve data inclusivity.

The ability to consider alternative sources can help us to increase the granularity of SDG indicator reporting and so provide an even more complete picture. This protocol seeks to maximise the benefits from non-official sources in the SDGs context while minimising risks. We are committed to publishing further high-quality data for SDGs, and this protocol will support the ONS and potential non-official source providers in ensuring we report in more detail in future.

Non-official sources: what is in scope?

In this protocol, we use “non-official source” to mean statistical outputs from non-official producers. This adapts the UKSA’s statistical classification of official and non-official statistics, since both may come from an official producer.

More specifically, a “non-official source” is:

An output that does not come from a UK governmental department or government-related body, local or devolved authority, or an official international reporting body.

This definition builds on the UKSA outline for types of official statistics and their producers. The Statistics and Registration Service Act (SRSA) 2007 lists government departments, the devolved administrations, ministerial authorities, and other persons acting on the Crown’s behalf as “official statistics producers”. The list of ministerial and non-ministerial departments provides useful general guidance on UK official statistics producers. This list is not exhaustive, as there are more official statistics producers across the UK nations.

This protocol applies primarily to statistical outputs from organisations other than government, such as charities, businesses and academic institutions. Out of scope are any statistics from official (government) UK producers and international entities like UN agencies and Eurostat. This is because sources from these producers already have formal guidance and principles, with a level of transparency that allows for straightforward quality assessment against the ONS’ standards. More detail on what is out of scope can be found in Section 4.

SDG platform sources hierarchy

The SDG indicator framework has a mixture of statistical and policy-based indicators, with the majority (85%) being statistical. Policy indicators usually require information from official bodies, so non-official sources are unlikely to be appropriate. For example, indicator 13.2.1 requires evidence for available policies on national adaptation plans. Our UK reporting currently provides information for all but one policy indicator.

For statistical indicators we prioritise quantitative (statistical) information over policy information. We may also reference relevant official documents to give more context to existing statistical reporting, or to provide relevant information while we work to identify appropriate statistical data sources.

We use a hierarchy to prioritise between different sources, particularly where these are of similar quality. This has three levels.

Level 1 (preferred)

Official statistics, including those classified as “National Statistics”, policies, or research from official producers. Such sources fully meet officially recognised quality standards and come from trusted official producers governed by formal regulations. For statistics, these are fully compliant with the UKSA Code, and official government research follows the Government Social Research publication protocol.

Level 2

Other statistical or qualitative information from official producers. These sources are expected to be produced reliably and with any quality issues clearly highlighted. These may be statistics that come from trusted official providers though may not be fully compliant with the Code and therefore are not badged as a National Statistic or an official statistic. This level includes international sources such as Eurostat or UN agencies (as outlined above), and official guidelines, frameworks, and reports.

Level 3

Statistical information from non-official producers. These are subject to assessment with this protocol. Assessment is required even if the non-official source has confirmed voluntary compliance with the Code and is on the list of voluntary adopters. Voluntary adoption is a scheme for non-official statistics led by the Office for Statistics Regulation (OSR), but it is not formally regulated. Non-official voluntary adopters are likely to have a very good chance of passing the protocol with a high score.

This protocol focuses on level 3, but sources at each level go through robust quality assurance processes prior to inclusion on the Platform.

We are also considering the potential of including non-official sources of qualitative information in the hierarchy of future iterations of this protocol. This might enable use of articles or reports from non-official sources to fill indicator gaps as a proxy, or to provide additional context. We occasionally use proxy indicators where we have suitable data that may not fully align with the UN requirements for the indicator. For example, there may be partial coverage (England only, rather than UK-wide) or a slight difference in classification.

In addition to the three-level hierarchy, we will prioritise any non-official sources that could potentially fill remaining headline indicator gaps. There is a high number of potential breakdowns that could be reported across the SDG indicators. For these separate disaggregation gaps, we will prioritise non-official source assessment for a set of disaggregations. We will outline these priorities in our annual SDG reports or otherwise publish them.

In exceptional circumstances, a non-official source could replace an official source, for example, where the non-official source is judged to be more suitable (using this protocol) than an official source that provides a proxy for the indicator.

Assessing sources: a two-stage process

The protocol assessment has two stages (Figure 1 provides a visual representation).

Quality Gateway

The Quality Gateway has a pass or fail on the requirements of ethics and privacy, transparency and accountability, and need. All requirements must be met to proceed to the second stage, otherwise the source is not considered appropriate for use on the Platform at this time.

Quality Matrix

The Quality Matrix has a pass threshold based on an average score. The source is scored from 0 to 3 on a range of criteria: relevance, methods, coverage, timeliness and ongoing availability, and data quality. A source with an average score of over 1.5 and with no individual criterion scoring 0 is considered suitable for including on the Platform.

Figure 1: Flow diagram for assessment of non-official statistical sources

Source: Office for National Statistics

The assessment at each stage is undertaken in parallel with consultation of the Code, as the scoring specifically references some of its principles. A non-official source is almost by definition not fully aligned with the Code, but this protocol satisfies the most critical and relevant sections. The scoring also takes into account elements of the ethics self-assessment tool recommended by the National Statistician’s Data Ethics Advisory Committee (currently known as the Centre for Applied Data Ethics). The quality dimensions in the protocol are also aligned where possible with the Government Data Quality Framework, and UKSA’s Questions for Data Users guidance. We recommend users refer to the core data quality dimensions in the Government Data Quality Framework for specific assessments of dataset quality.

The structure of the Quality Matrix scoring system is adapted from a model (unpublished) originally developed by Statistics Netherlands’ SDG team, which was based on previous work by Eurostat.

Given SDG indicator reporting requirements, the assessment’s quality criteria tend to be SDG specific and reference the UN SDG metadata. For example, the timeliness and ongoing availability criteria are aligned with the UN 2030 Agenda timeframe, giving the highest score to sources available from at least 2015, when the SDGs were launched. The UN typically specifies the methodology for each indicator, so we need to have information on the methods behind any non-official source used on the Platform. We hope that this protocol can be adapted for assessing non-official sources in other contexts and we encourage users to inform us if they plan to do so.

Communication and engagement with potential providers are essential, and any source that does not pass either stage of the assessment can potentially be included in future. We will look to support providers to strengthen the quality of their sources wherever possible. This could include encouraging and supporting commitment to the Voluntary Application of the Code, or improving metadata for the source.

3. Detailed source assessment process

The guidance approach for each stage is outlined below, and is meant to be used together with the UK Statistics Authority (UKSA) Code of Practice (“the Code”). Figure 1 gives a visual representation.

Stage 1: Quality Gateway

A source must pass on all three of the following criteria to proceed to the Quality Matrix scoring stage.

Ethics and Privacy

Pass: either there are no ethical concerns, or any concerns are fully documented and actions are in place to minimise identified risks. Fully compliant with all parts of the Code principles T1 (honesty and integrity) and T6 (data governance). Use is in line with the terms and conditions of the source. Privacy policy is compliant with the General Data Protection Regulation (GDPR) for the UK and the Data Protection Act 2018.

Fail: significant ethical concerns without any mitigations or considerations. Not fully compliant with principles T1 and T6 of the Code. The source’s terms and conditions prevent use of the data as required. Not compliant with GDPR and/or the Data Protection Act 2018. Any of these conditions would lead to a fail.

Transparency and Accountability

Pass: source meets principles T4.1 and T4.5 of the Code, ensuring processes for all parts of the data journey are transparent. If metadata information is not already in the public domain, permission must be granted to place this in the public domain.

Fail: source does not meet T4.1 and T4.5 of the Code. Source may not be fully transparent about any data quality issues or there is no metadata available.

Need

Pass: there is a clear identified need for the source, either due to a headline data gap or a priority disaggregation gap (see sources hierarchy). Alternatively, the source may be a better fit than a source already being used on the Platform. Sources suitable for non-priority disaggregations may be considered if all priority gaps have been filled and there is sufficient resource for additional assessment.

Fail: the proposed source does not fill a headline gap. The source is unlikely to improve on information already on the Platform.
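The three Gateway checks above combine as an all-or-nothing rule, which could be sketched as follows. This is a minimal illustration rather than the ONS implementation: the class and field names are hypothetical, and each pass or fail judgement is assumed to have been reached by an assessor beforehand.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class GatewayAssessment:
    """Assessor's pass (True) or fail (False) judgement on each Gateway criterion.

    Hypothetical structure, for illustration only.
    """
    ethics_and_privacy: bool
    transparency_and_accountability: bool
    need: bool

    def failed_criteria(self) -> list[str]:
        """Names of the criteria the source failed, in declaration order."""
        return [name for name, passed in asdict(self).items() if not passed]

    def passes(self) -> bool:
        """A source proceeds to the Quality Matrix only if every criterion passes."""
        return not self.failed_criteria()


assessment = GatewayAssessment(
    ethics_and_privacy=True,
    transparency_and_accountability=False,
    need=True,
)
print(assessment.passes())           # False
print(assessment.failed_criteria())  # ['transparency_and_accountability']
```

Recording which criteria failed, rather than only the overall result, fits the stated aim of supporting providers to strengthen a source for future assessment.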

Stage 2: Quality Matrix

Each criterion is scored, and an average score calculated. A source with average score over (but not equal to) 1.5 and no 0 scores passes.
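The pass rule can be written out as a short function. The sketch below assumes the five criteria are equally weighted and each scored as a whole number from 0 to 3; the function and key names are hypothetical.

```python
CRITERIA = ("timeliness", "relevance", "coverage", "methods", "data_quality")
PASS_THRESHOLD = 1.5  # the average must be strictly greater than this


def matrix_passes(scores: dict[str, int]) -> bool:
    """Return True if the scores meet the Quality Matrix pass rule."""
    if set(scores) != set(CRITERIA):
        raise ValueError(f"expected scores for exactly: {CRITERIA}")
    if any(score not in (0, 1, 2, 3) for score in scores.values()):
        raise ValueError("each score must be 0, 1, 2 or 3")
    if 0 in scores.values():
        return False  # any 'not acceptable' score rules the source out
    return sum(scores.values()) / len(scores) > PASS_THRESHOLD


example = {
    "timeliness": 2,
    "relevance": 2,
    "coverage": 2,
    "methods": 1,
    "data_quality": 1,
}
print(matrix_passes(example))  # True (average 1.6)
```

Because there are five criteria with whole-number scores, the average can never equal exactly 1.5, so the rule is equivalent to requiring a total of at least 8 with no criterion scored 0.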

Timeliness and ongoing availability

Score 3 (high): source is sufficiently current to be informative, with a time series from at least 2015 and no time lag greater than 15 months for annual data, or 6 months for monthly data. A wider lag of 2 years is acceptable when the impact of any statistical change may take longer to be observed, for example, for some environmental statistics. No gaps (missing data) in the time series. The source is expected to be regularly updated and available in the future. There must be a record of previous data points (the source provides a time series).

Score 2 (medium): source is sufficiently up to date to be informative, with a time lag no greater than 2 years (3 years for statistical changes that may take longer to be observed, such as some environmental statistics). There are no gaps in the time series and there must be a record of previous data points (the source provides a time series). New timely sources without previous data points that are expected to be updated and available in the future would be included.

Score 1 (low): source is older than 2 years (3 years for statistical changes that may take longer to be observed, such as some environmental statistics), but is still meaningful in the social, environmental, or economic context of the indicator. The time series may have gaps or only one data point has been provided.

Score 0 (not acceptable): source is too old to be meaningful, with the latest data point(s) before 2015, or has too great a time lag, or no reasonable expectation of future updates. The source does not provide access to existing historic data (time series).
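The time-lag limits in the timeliness scores can be summarised as a small lookup. The sketch below covers only the lag element; gaps in the time series, the 2015 start date and expected future availability still need separate judgement. The function names are hypothetical, and applying the wider 2-year allowance to both annual and monthly data is an assumption, as is rejecting frequencies the protocol does not mention.

```python
from typing import Optional


def max_lag_months(score: int, frequency: str, slow_change: bool = False) -> int:
    """Largest time lag, in months, allowed for a timeliness score of 3 or 2."""
    if score == 3:
        if slow_change:
            return 24  # wider lag, for example for some environmental statistics
        limits = {"annual": 15, "monthly": 6}
        if frequency not in limits:
            raise ValueError("the protocol only states limits for annual and monthly data")
        return limits[frequency]
    if score == 2:
        return 36 if slow_change else 24
    raise ValueError("lag limits are only stated for scores 3 and 2")


def highest_lag_band(lag_months: int, frequency: str, slow_change: bool = False) -> Optional[int]:
    """Highest score (3 or 2) whose lag limit is met, or None if neither is."""
    for score in (3, 2):
        if lag_months <= max_lag_months(score, frequency, slow_change):
            return score
    return None  # score 1 or 0, depending on whether the source is still meaningful


print(highest_lag_band(12, "annual"))                    # 3
print(highest_lag_band(18, "annual"))                    # 2
print(highest_lag_band(30, "annual", slow_change=True))  # 2
print(highest_lag_band(30, "annual"))                    # None
```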

Relevance

Score 3: close match with United Nations (UN) Sustainable Development Goals (SDG) metadata, or gives more detail than the metadata requires. Fully compliant with the Code principles Q1.1 and Q1.5 on suitable data sources. Disaggregations specified in the SDG indicator title are reported as a minimum, potentially supported by additional Inclusive Data Charter (IDC) disaggregations.

Score 2: partial match with UN metadata or disaggregation referenced in indicator title. Fully compliant with the Code principles Q1.1 and Q1.5. May enable reporting of additional disaggregations in the IDC.

Score 1: does not fully report the indicator but is an appropriate proxy relevant to the UK national context. Fully compliant with the Code principles Q1.1 and Q1.5. May enable reporting of relevant disaggregations.

Score 0: does not align well with the metadata for the indicator or provides no appropriate proxy for headline or relevant disaggregation gaps.

Coverage

Score 3: data robustly and reliably measures the entire UK population or the entire UK geography (as appropriate to the indicator). Additional breakdowns for all UK nations may also be available.

Score 2: metadata is clear and transparent about the identifiable population covered or not covered. The population is a suitable representation for reporting the indicator, though may not cover the entire UK population or geography. There may be data for some, but perhaps not all, UK nations.

Score 1: metadata is available on the identifiable population covered, but the limitations of partial coverage may not be fully considered by the source producer. The population is adequate for reporting the indicator, even if this could potentially be improved.

Score 0: metadata is unclear about the identifiable population or coverage is not specified. The population may be of too limited representation or the sample too small to be appropriate for reporting the indicator.

Methods

Score 3: internationally comparable and in line with the UN metadata. The methods are appropriately applied, fit for purpose and transparently described. Fully compliant with the Code principle Q2 (sound methods) by using the best available methods openly.

Score 2: the methods are appropriately applied, and well described. Largely compliant (compliant with half or more of the principles) with the Code principles under Q2, including Q2.3 and Q2.4.

Score 1: methodology described in detail and transparent. Methods are justifiable, but might be lacking scientific proof, or alternative methods may be available that might produce improved results. Largely compliant with the Code principles under Q2.

Score 0: methodology is neither described nor justified.

Data quality

Score 3: data validation procedures are outlined. It is clear how the data were collected and (if relevant) pre-processed. Largely compliant with standards in the Code principle Q3 (assured quality), specifically outlining aspects of accuracy and reliability.

Score 2: compliant with principle Q3.2 from the Code – transparency about the quality assurance approach taken throughout the preparation of the statistics, any issues with quality of the data and statistics are transparently outlined.

Score 1: some basic checks have been conducted and accuracy and reliability of the data source can be established, but no formal quality assurance available.

Score 0: no information on data quality or quality assurance of the statistics.

4. What is out of the scope and why

This protocol does not cover statistics from official producers, even where those statistics are not classed as a “National Statistic” or an “official statistic”. Appropriate checks are incorporated into our National Reporting Platform’s (“the Platform”) reporting processes regardless of the source. All information goes through a quality assurance process and approval by the topic expert – usually the data provider or a government body contact with the necessary expertise.

Statistics from recognised official international bodies are also out of scope. The Sustainable Development Goals (SDGs) are a global initiative, so there are a range of international organisations collecting, validating, and disseminating SDG-related data that may be included on the Platform. There are formal regulations and quality checks for statistics published by such organisations.

The UN Statistics Division (UNSD) and other international agencies are covered by the Committee for the Coordination of Statistical Activities. Data from these producers may be used on the Platform, for example, World Health Organisation data on concentrations of fine particulate matter. If the data originate from non-official sources, they can be classed as pre-approved and do not need to pass this protocol because they should be compliant with the UNSD’s Principles Governing International Statistical Activities. These principles incorporate compliance with the Fundamental Principles of Official Statistics and the Recommended Practices on the Use of Non-Official Sources in International Statistics. Eurostat is covered by the European Statistics Code of Practice.

This protocol does not cover raw input data (microdata). Guidance on expected quality standards of such raw datasets is provided by the Office for Statistics Regulation’s (OSR) Quality Assurance of Administrative Data tool and the Government Data Quality Framework. There are cases where the underlying raw data of a non-official source may be suitable for performing analyses related to an SDG indicator. In these cases, we contact the producer to discuss the possibility of obtaining the data and publishing a statistical analysis of it. Alternatively, we may collaborate with the producer to publish the statistics for the Platform. In both cases, the end result must pass the non-official protocol.

5. Limitations and considerations

The protocol is designed by the Sustainable Development Goals (SDGs) team at the Office for National Statistics (ONS) to enable wider use of sources on our National Reporting Platform (“the Platform”). This procedure is not regulated by a formal body, such as the Office for Statistics Regulation (OSR), but we have informed and consulted OSR representatives. While there are real benefits from enabling non-official sources to be used for SDG indicator reporting, there are also risks and limitations.

We recognise that there is some room for interpretation in this protocol. The methodology is less prescriptive for lower scores on the individual components of the Quality Matrix stage because the SDGs cover such a wide range of topics that the criteria need to be flexible. When using this protocol in practice, the ONS SDG team will carry out consistency checks and apply escalation routes in the case of any disagreements.

In producing this protocol, we have considered several limitations and risks, and attempted to mitigate these.

Scope

A risk is that the scope of the protocol is so wide that the Platform becomes an extensive or unmanageable library of statistical sources. Our resources are limited, so we have mitigated this by providing a hierarchy with different conditions for considering headline and prioritised disaggregation gaps.

Trading-off between criteria

There is a potential limitation that the scoring system allows sources to “trade off” areas of weakness, rather than addressing them. The requirement to pass the Gateway and discounting any source with a 0 score helps to mitigate this. We have tested combinations of scorings for any obvious trade-offs between the criteria. While we have not weighted the criteria, it is something we may consider in future development. We hope that the assessment will enable us to engage with potential producers to improve their sources.
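One way to look for such trade-offs is to enumerate every possible scoring combination and inspect the ones that pass. The sketch below assumes five equally weighted criteria scored 0 to 3 and the pass rule stated in this protocol. It illustrates the idea only and is not a record of the testing carried out.

```python
from itertools import product

N_CRITERIA = 5        # timeliness, relevance, coverage, methods, data quality
PASS_THRESHOLD = 1.5  # the average must be strictly greater than this


def passes(scores: tuple[int, ...]) -> bool:
    """Quality Matrix rule: no criterion scored 0 and an average above 1.5."""
    return 0 not in scores and sum(scores) / len(scores) > PASS_THRESHOLD


all_profiles = list(product(range(4), repeat=N_CRITERIA))
passing = [profile for profile in all_profiles if passes(profile)]

# Largest number of low (1) scores that a passing profile can contain.
most_low_scores = max(profile.count(1) for profile in passing)

# Distinct passing score mixes with that many low scores, ignoring which
# criterion received which score.
weakest = sorted({tuple(sorted(p)) for p in passing if p.count(1) == most_low_scores})

print(len(all_profiles), len(passing))  # 1024 222
print(most_low_scores)                  # 3
print(weakest)                          # [(1, 1, 1, 2, 3), (1, 1, 1, 3, 3)]
```

Under these assumptions, a source can score 1 on three of the five criteria and still pass, provided the other two scores are high enough. Profiles like these are the ones a weighting scheme would most affect.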

Endorsement and neutrality

A possibility is that non-official source producers or other stakeholders perceive inclusion on the Platform as the ONS’ endorsement, with heightened risks where sources are perceived as controversial. We state that where a source meets the criteria for inclusion on the Platform, this does not constitute a formal endorsement by the ONS. Inclusion would not confer any kind of official status. The Gateway stage also ensures that sources with an overt political agenda or conflicts of interest cannot be included.

Official sources quality

A risk is that some official sources might not pass the protocol if it were applied to them, so they should also be assessed. This protocol was produced because there is no existing guidance for non-official sources. We have tested the assessment on statistics used on the Platform from a range of official sources and they have all passed. While it is possible for an official source from hierarchy level 2 to score low on some of the protocol criteria, we have quality assurance processes that apply to all data we report. These include guidance issued by the ONS and the UK Statistics Authority (UKSA), and adherence to the UN-specified metadata where possible.

Scoring precision

A limitation is that the cut-off threshold and scoring criteria are very specific, so potentially useful statistics may not pass for inclusion on the Platform. The minimum individual criterion pass score of 1 is generally less specific and allows for discretion on a case-by-case basis. The scoring template used for this has free-text fields to capture the justification for each scoring decision. These are also validated by a second assessor. This method may also allow for a composite score of two sources that individually do not pass the protocol.

Qualitative sources

A risk is that the protocol excludes potentially useful non-official sources of qualitative information. This first iteration focuses on non-official sources of statistical information. Qualitative sources also have value, and we will be considering how this protocol might be developed to allow for a similarly balanced assessment of benefits and risks. We welcome stakeholders’ views on this.

6. Future development

Stakeholders across the Government Statistical Service (GSS) have been consulted on this first iteration of the protocol.

We recognise there may be scope for improvements and welcome feedback. Drawing on this feedback, usage, and wider experience, we are likely to produce further iteration(s). Substantial changes and developments will be made available as separate publications. Minor changes and refinements will be announced on the Platform’s updates page.

Potential developments may include refining the scoring dimensions and similar assessment for non-official sources of qualitative data, such as journal articles which could help to fill outstanding headline or disaggregation gaps.

Our user engagement feedback also suggests that there is significant demand for additional contextual information. Non-official qualitative sources could play a useful role for providing further insight for certain indicators. We intend to publish a consultation draft on this once the project is under way, and we welcome feedback.
