
1. Disclaimer

These Research Outputs are not official statistics relating to the labour market. Rather, they are published as outputs from research into an alternative prototype survey instrument (the Labour Market Survey (LMS)) to that currently used in the production of labour market statistics (the Labour Force Survey (LFS)).

This technical report should be read alongside the accompanying comparative estimates report and characteristics report to aid interpretation and to avoid misunderstanding. These Research Outputs must not be reproduced without this disclaimer and warning note.

2. Introduction

As part of the Office for National Statistics (ONS) and UK Statistics Authority (UKSA) Business Plan for April 2019 to March 2022, the Census and Data Collection Transformation Programme (CDCTP) is leading an ambitious programme of work to put administrative data at the centre of the population, migration and household statistical systems. This programme of research is underpinned by the Digital Economy Act 2017, which allows the ONS to access data directly from administrative and commercial sources for research and statistical purposes for the public good. In addition to administrative data, there will also remain a need for some residual survey data collection, which the ONS intends to be “digital by default”. In the context of household surveys, this means providing an online self-completion mode to respondents (as well as face-to-face and telephone collection). An online mode will enable respondents to provide data at their own convenience, reduce respondent burden and reduce operational costs.

Up until very recently, data collection for household surveys has not included digital collection. However, since November 2019, the Opinions and Lifestyle Survey has included an online mode. The vast majority of household survey data collection is conducted by face-to-face interviewers who interview respondents in their own homes. Therefore, as part of the CDCTP, the Social Survey Transformation (SST) Division has been looking at the end-to-end survey designs for the current household survey portfolio. This includes the Labour Force Survey (LFS) and the feasibility of moving household survey data collection to a default online mode of collection. We expect that the face-to-face and telephone modes would be offered to households that do not complete online.

To collect labour market data via an online-first collection design, a new prototype product is being developed called the “Labour Market Survey” (LMS). The LMS is a mixed-mode survey that focuses on the core data collection requirements needed to produce labour market estimates. It is anticipated that after the integration of administrative data into the estimation system for labour market statistics, the LMS could replace the LFS and be the instrument used to collect any residual survey data requirements. The LMS would also be used to collect socio-demographic variables, which would allow the survey data to be linked to the administrative data sources. The design of the LMS is still in development and, as such, is currently a prototype. It is based on the design of the LFS, but there are some differences between the two products, which this report will detail.

3. Approach to questionnaire design

The existing Labour Force Survey (LFS) is designed for face-to-face and telephone capture modes. With the introduction of an online mode for the Labour Market Survey (LMS), it is not possible to simply translate the existing LFS questionnaire design and flow to this new mode. Initial research demonstrated that the content is not suitable for self-completion as it was developed for interviewer-led collection. As a result, a transformative approach has been taken to the development of the LMS, which makes it significantly different to the LFS.

We began developing the questionnaire by understanding the concepts in the core labour market framework and identifying the data requirements of these concepts. The approach to the development of the wording and flow of the questions used to capture these concepts was a departure from the way that questionnaires have traditionally been designed at the Office for National Statistics (ONS). By adopting a respondent-centred design, the focus of the design effort was moved to the respondent user. It ensured that the terms were respondent-friendly (for example, recycling the language that they use to describe their circumstances in the question wording) and adapted the questionnaire flow to meet the “mental model” of a concept. The mental model approach provided information on the thought processes of respondents as they interpreted labour market concepts, leading to an understanding of the beliefs and thoughts they had on the topics they were being asked to consider. These designs aimed to develop a questionnaire that the respondent could identify with while still gathering accurate data to meet the requirements of users of the LMS’ data output.

To design and deliver in this way required an extensive qualitative design research programme, involving over 1,000 members of the public (to date) to iteratively design the questions to meet the specified data needs of users of the core LMS. There were four phases to the qualitative redesign – Discovery, Alpha, Beta and Live – following the best practice recommended in the GOV.UK service manual.

The Discovery phase

The Discovery phase involved gathering the data needs of users for each variable, based on the ONS labour market framework. Each core data requirement was discussed with users with the aim of determining the purpose of the analysis and original question. This process enabled the development of testing guides and research plans to explore the core of these concepts with the public and ONS field interviewers.

Insight sessions were then conducted with ONS interviewers to discuss the current data collection process to learn details about what was, or was not, working well with the questionnaire. Observations of live data collection with interviewers also took place for existing questions to evaluate how they performed in the field first-hand.

The third step of the Discovery phase was to interrogate data already available such as the current LFS questionnaire. This enabled inefficiencies in the questionnaire flow to be identified as well as opportunities to modify routing to improve the respondent experience. For example, questions that LFS data demonstrated were only applicable to a small number of respondents were moved to more appropriate sections of the questionnaire.

Finally for Discovery, in-depth interviews were conducted with members of the public on certain topics to learn about their mental model for understanding the concepts being measured. Once this was completed, user stories that documented user needs were developed along with an Alpha phase research plan.

The Alpha phase

In the Alpha phase, a series of prototype questions were developed. Prototypes were developed primarily for mobile devices (that is, smartphones or tablets) to accommodate smaller screen sizes. This was done to create leaner questions and reduce the opportunity to add in on-screen information, in turn forcing the design challenge to be addressed in an innovative way.

The approach taken to the questionnaire design was termed “optimode” development (that is, optimised by mode). Each prototype question was optimised for each mode (online, face-to-face and telephone). Questions were developed for completion both in person and by proxy. Each question in the test was designed for online completion first and tested in that mode. The online questionnaire was used as a basis and then optimised for the face-to-face mode. The research work then focused on testing that question in the alternative mode to discover where adaptations were required in order to optimise the question for the mode (to aid comprehension and delivery) and to meet the data needs of users.

ONS interviewers were also shown the questions before testing with the public, to obtain their professional opinion of the redraft. If the online version worked well in the face-to-face mode, then the question was left unchanged. The same approach was taken for the telephone version of the question, although telephone collection was not used for this test. Some questions were identical across all three modes and some were amended to suit the mode. However, all were optimised for the mode. Each prototype question was tested for readability using an online tool that checks the estimated reading ability required to comprehend the wording (expressed as a “reading age”). The overall question look or “pattern” was designed to be accessible to all users based on Government Digital Service (GDS) standards.

The Alpha phase also consisted of iterative testing where multiple versions of the questions were tested then edited based on research activity outcomes. The LMS question prototypes were tested qualitatively with members of the public via in-depth interview and cognitive tests. The samples for these rounds were recruited through a recruitment agency that is widely used across the UK Government in the design of services, and they were targeted based on the research plan and required learning. The interviews covered both cognition and usability in the same session, as question layout can impact on comprehension. Up to 25 questions were included in a round to ensure that questions were asked in the correct context to aid comprehension.

The “mental model” concept was explored further in the Alpha phase to validate whether the changes made using the Discovery insights were accurate. Respondents were interviewed to learn about how they understood, processed and responded to the questions they were being asked to consider. Interviews were transcribed and analysed using thematic analysis techniques to identify common themes and issues. The prototypes were then iteratively redesigned, and the testing cycle was repeated at least three times:

test one aimed to test the initial draft

test two aimed to test the changes from test one insights

test three explored whether the changes had fixed the issue

On occasions where the issue still existed after test three, the questions were integrated into subsequent rounds to refine them further.

The Beta phase

Once this process was complete, the question design moved into the Beta phase. This involved quantitative testing using a large sample, incorporating the operational design for communications with users. Two previous quantitative tests of the LMS have taken place, and the outcomes informed the development of the LMS Statistical Test. We analysed paradata detailing how users interacted with the prototype questionnaire, patterns of access and response and data relating to where respondents “dropped out” of the questionnaire. Any issues that were encountered led to further Alpha and Beta testing. Section 4, “Quantitative testing to date”, provides an overview of the previous tests and links to the full reports.

A minimal set of questionnaire checks was included in this prototype LMS questionnaire. By contrast, the LFS uses checks that compare responses across one or more variables to support consistency. Logic checks, such as rejecting invalid dates and preventing alphabetic characters being entered into numeric fields, were included, but more detailed and specific checks, such as ensuring consistency in the household relationships (for example, that a grandfather and granddaughter are coded correctly), were not. No questions were “hard checked” so that they had to be completed; any question could be bypassed without answering. This was by design, to determine how a minimal set of checks and skippable questions would influence completion rates and respondent journeys. If respondents do not have to move back and forth through the questionnaire to revise answers that checks have deemed “invalid”, then the likelihood of respondents dropping out of the survey should be reduced. However, this will need to be balanced against the quality of the data produced; if checks are needed to resolve data quality issues, then their use will need to be explored. This approach will be evaluated using the test paradata and may be revised for future testing.
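To illustrate the kind of basic logic checks described here, the following minimal sketch rejects non-numeric input in a numeric field and impossible dates, while leaving every question skippable. The function names, messages and return convention are hypothetical and are not taken from the LMS questionnaire specification.

```python
from datetime import date
from typing import Optional


def check_numeric(raw: str) -> Optional[str]:
    """Return an error message, or None if the answer is acceptable.

    An empty answer is accepted because no question is "hard checked".
    """
    value = raw.strip()
    if value == "":
        return None  # question skipped: allowed by design
    if not (value.isascii() and value.isdigit()):
        return "Enter a number using digits only"
    return None


def check_date(day: str, month: str, year: str) -> Optional[str]:
    """Reject impossible dates such as 31 February; allow a skipped question."""
    if not (day or month or year):
        return None  # question skipped: allowed by design
    try:
        date(int(year), int(month), int(day))
    except ValueError:
        return "Enter a real date"
    return None
```

A cross-variable consistency check, such as comparing the ages of two people against their stated relationship, would sit on top of checks like these; that is the category this prototype left out.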

4. Quantitative testing to date

The two previous quantitative tests have been used to test a number of experimental conditions and mainly provided operational data towards the development of strategies for engaging with respondents using an online-first questionnaire. These tests complemented the qualitative testing by providing response data and paradata, but the designs were online only. As the survey designs for these tests were early prototypes, no statistical estimates were produced.

The first quantitative test in the series was conducted in July 2017 and was a single-wave, online-only response rate test of an initial prototype Labour Market Survey (LMS). The test aimed to determine the effectiveness of different communication strategies and respondent materials (including letter content, envelope colour, postal days and envelope branding). The outcomes of the test provided a baseline online engagement rate of 19.5% and an indication of the most appropriate communications strategy to use in future tests, and they validated the approach that was being taken. Further details on this response rate test are available.

Subsequent to this, a second test conducted between September and October 2017 investigated different incentivisation strategies (including £5 or £10 unconditional vouchers, £5 or £10 conditional vouchers, and reusable canvas carrier bags). This test demonstrated that the cost-effective canvas bag incentive could produce a Wave 1 online engagement rate of 27.5%, and it further reinforced the effectiveness of the engagement strategy. Further details on this response rate test are available.

5. The Labour Market Survey Statistical Test

The test detailed in this report builds upon the research and learning from the previous tests as well as the suite of qualitative research. This test is known as the “Labour Market Survey (LMS) Statistical Test” as it has been designed to collect data that will allow important labour market statistical estimates to be produced. This will be the first instance in which the prototype LMS survey has been used to produce such estimates and is a significant step towards the transformation of the Labour Force Survey (LFS). This test uses a mixed-mode approach (online and face-to-face) and marks the first time the Office for National Statistics (ONS) has tested such a mode combination at scale for a household survey. The test covers Wave 1 collection only and does not replicate the longitudinal five-wave structure of the LFS, which will be tested at a later date.

The objectives of the test were:

to measure the online engagement rate and mixed-mode response rates

to produce important labour market estimates using a prototype new survey data source (see LMS comparative estimates report)

to compare the estimates to the existing data source (that is, the LFS) (see LMS comparative estimates report)

to compare the socio-demographic characteristics of responding households or individuals (see LMS characteristics report)

to evaluate all aspects of the test (including design, operations and results) and incorporate the results into future research and testing cycles

Data collection was outsourced to Ipsos MORI, which hosted and programmed the online questionnaire based on a specification provided by the ONS and supplied the field force for the face-to-face interviews.

6. Sample design

The sample for addresses in England and Wales was drawn from AddressBase, an Ordnance Survey or GeoPlace product comprising local authority, Royal Mail and Council Tax data, available to the Office for National Statistics (ONS) under the Public Sector Mapping Agreement. This product will have future use in sampling ONS address-level surveys such as the census or social surveys. Currently, the Postcode Address File (PAF) is used as the sampling frame for social surveys – this is a list of all addresses to which Royal Mail delivers mail. The version of AddressBase used in this test did not contain data on addresses in Scotland; therefore, the PAF was used as the sampling frame for Scottish addresses.

A proportional sample of 14,149 addresses was drawn from across England (12,174), Scotland (1,258) and Wales (717) using a stratified simple random selection process, similar to the process used for the Labour Force Survey (LFS). The sample did not include addresses in Northern Ireland where the Northern Ireland Statistics and Research Agency (NISRA) conducts the LFS. Future tests are likely to include a sample of addresses in Northern Ireland. The target population of the Labour Market Survey (LMS) was based on the population of Great Britain who are resident in private households. Unlike the LFS, residents in NHS accommodation, young people living away from home in student halls of residence or other similar institutions, and addresses north of the Caledonian Canal were excluded from the sample for this test of the LMS. This is just for the purposes of this particular test, rather than a design feature of the LMS. In the longer term, the LMS would include these addresses in its sample.

Each interviewer area on the LFS is split into 13 “stint” areas, with each stint allocated to a specific week in a quarter. The LFS stints of Great Britain, excluding those north of the Caledonian Canal in Scotland, formed the list of primary sampling units from which the LMS Statistical Test sample was selected. There are 2,691 LFS stints in scope; 394 stints were selected at random using systematic probability proportional to size, where size is defined as the number of post office delivery points.

In each stint, 36 addresses were selected using systematic random sampling from the prototype AddressBase product. Addresses sampled by the ONS for surveys within the previous two years were excluded from the sampling frame. The design of the LMS is hence a two-stage clustered design where all addresses have the same probability of selection into the sample. The selected clusters were assigned at random to the weeks of the selected quarter, allocating an equal number of clusters to each week.
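The two selection stages described above can be sketched as follows. This is an illustrative sketch only, not the ONS sampling code: the function names are hypothetical, the stint sizes are made-up numbers standing in for delivery point counts, and stratification and the exclusion of recently sampled addresses are omitted.

```python
import random
from typing import List, Sequence


def systematic_pps(sizes: Sequence[int], n: int, rng: random.Random) -> List[int]:
    """Select n unit indices with probability proportional to size.

    Units are laid end to end on a line of length sum(sizes). A random start
    and a fixed interval give n points, and each point selects the unit it
    falls in. Assumes no unit is larger than the interval.
    """
    interval = sum(sizes) / n
    start = rng.uniform(0, interval)
    selected: List[int] = []
    cumulative, unit = 0, 0
    for k in range(n):
        point = start + k * interval
        # Walk forward until the current unit's span contains the point
        while unit < len(sizes) - 1 and cumulative + sizes[unit] <= point:
            cumulative += sizes[unit]
            unit += 1
        selected.append(unit)
    return selected


def systematic_sample(frame_size: int, n: int, rng: random.Random) -> List[int]:
    """Select n positions from an ordered frame (random start, fixed interval)."""
    interval = frame_size / n
    start = rng.uniform(0, interval)
    return [min(int(start + k * interval), frame_size - 1) for k in range(n)]


rng = random.Random(2019)
# Hypothetical sizes for the 2,691 in-scope stints
stint_sizes = [rng.randint(2000, 6000) for _ in range(2691)]
stints = systematic_pps(stint_sizes, 394, rng)                   # stage one
addresses = systematic_sample(stint_sizes[stints[0]], 36, rng)   # stage two, first stint
```

With stints selected with probability proportional to size and a fixed 36 addresses taken per stint, the size term cancels in the overall selection probability, which is consistent with the equal-probability property described above. This holds exactly only if the size measure equals the number of frame addresses in the stint, which is an assumption of the sketch.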

Table 1 demonstrates the composition of the LMS test sample by country, and by region in England. Addresses in England comprised 86.1% of the sample; 8.9% of sampled addresses were in Scotland and 5.1% were in Wales. The sample for England was proportionally drawn from each region.

| | Total issued households | Proportion of total sample (%) |
|---|---|---|
| England | 12,174 | 86.1 |
| North East | 645 | 4.6 |
| North West | 1,670 | 11.8 |
| Yorkshire and the Humber | 1,148 | 8.1 |
| East Midlands | 1,130 | 8.0 |
| West Midlands | 1,224 | 8.7 |
| East | 1,364 | 9.6 |
| London | 1,729 | 12.2 |
| South East | 1,899 | 13.4 |
| South West | 1,365 | 9.6 |
| Wales | 717 | 5.1 |
| Scotland | 1,258 | 8.9 |
| Total | 14,149 | 100.0 |

Table 1: Labour Market Survey sample composition by country and region

The definition of an “ineligible” address for this LMS Statistical Test was consistent with the definition used for the LFS. Properties that were vacant, demolished or under construction were not eligible for the test, nor were communal establishments or institutions, holiday or second homes, and non-residential addresses such as businesses. Overall, 94.9% of addresses sampled were eligible for participation in the test.

7. Prototype questionnaire content

The content of the prototype Labour Market Survey (LMS) questionnaire has been developed from the core requirements specified by the Labour Market and Households Division in the Office for National Statistics (ONS), based on the ONS framework for labour market statistics, which has been developed in accordance with International Labour Organization (ILO) principles. The framework is based on the concepts of supply and demand in the labour market; the “supply” aspect consists of those people defined as being employed or those who are unemployed or economically inactive but can be considered as potential labour supply. The “demand” aspect relates to employers that require the work to be done. The prototype LMS has content based on the “supply” side of the framework, and this test covers the “core” requirements only.

The “core” requirements collected as part of this test related to demographic information for all household members; individual demographics such as date of birth, nationality and marital status; individual employment such as employment or unemployment status and hours worked; and highest educational qualification. For the purposes of this test, all core requirements have been collected using the survey instrument – the future vision for social surveys is that administrative data will form the basis of the data source and the survey element will capture residual requirements and data linkage variables. Research is continuing into the use of administrative data sources, and this test does not incorporate any administrative data – this will form the basis of future testing and research. The full list of questions for this test can be found as accompanying documentation with the LMS comparative estimates report. This test collected data that allow the derivation of the following important labour market variables:

ILODEFR and INECAC – economic activity

CURED – current education received

REDUND – whether made redundant in last three months

DURUN – duration of ILO unemployment

SECJMBR – whether has a second job or status in second job

SUMHRS – total actual hours worked in main and second job(s)

The questionnaire content for this test does not represent the final content of the proposed LMS. The content is a prototype that has been, and continues to be, developed iteratively based on the evaluation of testing and further research. The survey design used in this test will be re-evaluated based on the outcomes of the test and will be iteratively improved upon. The ONS labour market framework covers additional labour market requirements that this test did not capture, for example, temporary work, guaranteed minimum hours and expanded self-employment. As per the ONS strategy, work is ongoing to determine if administrative data sources can be used to provide this data and, where it is not possible, residual survey data collection will be investigated.

8. Rolling reference week

In order to reduce potential recall bias, the Labour Market Survey (LMS) Statistical Test utilises a “rolling reference week” for the labour market content, compared to the “fixed reference week” used for the Labour Force Survey (LFS).

The “rolling reference week” is defined as the week prior to the date on which a household started to complete either the online or face-to-face survey; this is an automatic process performed by the collection instrument. Once the rolling reference week for a household has been defined, it remains static; if a household returns to the survey at a later date to enter further data, the reference week remains unchanged.
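The behaviour described above, where the reference week is set on first access and then frozen, can be sketched as follows. The report does not state the week boundaries, so this sketch assumes a Monday-to-Sunday calendar week; the class and function names are hypothetical and do not describe the actual collection instrument.

```python
from datetime import date, timedelta
from typing import Optional, Tuple


def previous_week(start: date) -> Tuple[date, date]:
    """Return (Monday, Sunday) of the calendar week before `start`."""
    this_monday = start - timedelta(days=start.weekday())
    return this_monday - timedelta(days=7), this_monday - timedelta(days=1)


class Household:
    """Holds the reference week, which is set once and never changed."""

    def __init__(self) -> None:
        self.reference_week: Optional[Tuple[date, date]] = None

    def open_survey(self, today: date) -> Tuple[date, date]:
        if self.reference_week is None:  # first access fixes the week
            self.reference_week = previous_week(today)
        return self.reference_week


household = Household()
first = household.open_survey(date(2019, 1, 11))   # hypothetical first access
later = household.open_survey(date(2019, 1, 25))   # return visit two weeks on
assert first == later  # the reference week is unchanged on return
```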

A “fixed reference week” is a reference week for which the date has been pre-determined prior to the start of the data collection. The reference week is again determined by the collection instrument, but it will be to a fixed week within the collection month. This is the type of reference week used in the LFS.

The use of a rolling reference week was put in place to aid respondent recall, based on evidence from the qualitative testing process; if the reference week is closer to the collection date, then respondents are more likely to recall the information they are being asked to provide, thereby reducing the risk of recall bias and error. This is particularly applicable with the eight-week data collection period used in this test, which may have introduced more recall bias or error if respondents were asked to recall their labour market activities from many weeks previously.

The distribution of the rolling reference week is subject to more variation than a fixed reference week, particularly with the introduction of the online mode, as respondent behaviour can influence the allocation of the sample to each particular week. For example, during holiday periods, the distribution for that reference week may be lower since respondents are unavailable to complete, but for the following week it could be higher, when respondents are available. To account for such variations, the distribution is incorporated into the weighting process (detailed in the LMS comparative estimates report).

9. Engagement strategies

The previous online-only Labour Market Survey (LMS) tests have provided evidence towards the optimal engagement strategy for an online-first survey. This evidence-based approach was used to define the materials, content and mailing strategy for this mixed-mode test.

All sampled addresses were sent a pre-notification letter that included details informing respondents that they had been sampled to take part in a social survey and that an invite letter would be arriving in the coming days. This initial communication also included information on how to find out more about the survey by going online or contacting the Office for National Statistics (ONS) Survey Enquiry Line (SEL). Letters were sent by second-class post and dispatched on a Wednesday, with the expectation that they would be delivered either on the Friday or Saturday of that week.

The invite letters were sent one week after the pre-notification letter and included instructions for respondents on how to complete the survey. This involved going to the URL, www.ons.gov.uk/takepart (the landing page) and clicking a “Start Now” button. Respondents were then directed to a website where they could enter a 12-digit, numeric-only unique access code (UAC) to access the survey. Each invite letter contained a UAC that was associated with the sampled household only. The invite letter also contained an unconditional incentive for each sampled address – the incentive was a “tote bag”, a reusable bag made from canvas that had a graphic representing statistics produced by the ONS on one side, printed in colour. This type of incentive is unique to the LMS and is part of the test; it is not currently in use for the Labour Force Survey (LFS) or any other social survey.
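As a small illustration of the access code format described above (12 digits, numeric only), the sketch below checks whether an entered value is well formed. The function name is hypothetical, and ignoring spaces typed between digit groups is an assumption rather than documented behaviour of the survey website. A well-formed code is not necessarily one that was issued to a sampled household.

```python
import re

# Exactly 12 ASCII digits
UAC_PATTERN = re.compile(r"[0-9]{12}")


def is_well_formed_uac(entered: str) -> bool:
    """True if the input is exactly 12 digits once spaces are removed."""
    return UAC_PATTERN.fullmatch(entered.replace(" ", "")) is not None
```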

A reminder letter was sent to all addresses that had not accessed the online survey after five days (that is, the Monday after the invite letter was sent). This letter was sent on a Tuesday, second class, to arrive on the Thursday of that week. The timeline of the engagement strategy in operation is:

T minus 9 days – pre-notification letter for the online survey is dispatched (Wednesday)

T minus 2 days – invite letter for the online survey, including the UAC, is dispatched (Wednesday)

T – online data collection starts (Friday)

T plus 4 days – reminder letter is dispatched (Tuesday)

T plus 11 days – face-to-face data collection starts (Tuesday)
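The timeline above can be expressed as day offsets from T and applied to any cohort start date. The sketch below does this for a hypothetical Friday start date; the names are illustrative and the date is not one of the actual test dates.

```python
from datetime import date, timedelta
from typing import Dict

# Offsets in days relative to T, the first day of online collection
MAILING_OFFSETS = {
    "pre-notification letter dispatched": -9,
    "invite letter dispatched": -2,
    "online collection starts": 0,
    "reminder letter dispatched": 4,
    "face-to-face collection starts": 11,
}


def cohort_schedule(t: date) -> Dict[str, date]:
    """Return the date of each engagement step for a cohort starting on T."""
    return {step: t + timedelta(days=offset) for step, offset in MAILING_OFFSETS.items()}


schedule = cohort_schedule(date(2019, 1, 11))  # hypothetical Friday start
for step, when in schedule.items():
    print(f"{when:%A %d %B %Y}: {step}")
```

Because the offsets are fixed, a Friday start always places both letter dispatches on a Wednesday and the reminder and face-to-face start on a Tuesday, matching the weekdays given in the list.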

10. Data collection operations

The Labour Market Survey (LMS) Statistical Test was mixed mode – online first, with a face-to-face follow up for non-responding addresses. Collection of the data for both modes was outsourced to Ipsos MORI, which provided the Computer-aided Web Interview (CAWI) online hosting and questionnaire, and the Computer-aided Personal Interview (CAPI) data collection for which Ipsos MORI provided field interviewers. The Office for National Statistics (ONS) Survey Enquiry Line (SEL) provided a help and advice service for respondents.

The first two weeks of data collection for each cohort were exclusively for online data collection. There were no restrictions on how often a household could use their unique access code (UAC) to access their online questionnaire during the data collection period. For security and data protection, the questionnaire was “locked” when a household exited the survey either upon completion, when they closed their internet browser, or when their session ended because of a period of inactivity. If the UAC was then used to access the survey again, the respondent would be taken to the last question answered and could not return to previous answers; this was to prevent data disclosure.

All sampled addresses that, after two weeks, had not accessed the survey, had not been identified as ineligible properties and had not contacted the SEL to refuse participation were issued to a field force supplied by Ipsos MORI for face-to-face data collection. The face-to-face collection period lasted for six weeks for each cohort; during this time, households could still access and complete the survey online. Interviewers made a maximum of four calls to each address in an attempt to conduct a face-to-face interview. This is fewer calls than on the Labour Force Survey (LFS), where interviewers can make up to 10 contacts.
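The rule for issuing an address to the field force combines three conditions, all of which must hold at the end of the online-only period. A minimal sketch, with hypothetical field names:

```python
from dataclasses import dataclass


@dataclass
class Address:
    accessed_online: bool   # UAC used to open the online survey
    ineligible: bool        # for example, vacant or non-residential
    refused_via_sel: bool   # household contacted the SEL to refuse


def issue_to_field(address: Address) -> bool:
    """Issue for face-to-face follow-up only if none of the flags is set."""
    return not (address.accessed_online or address.ineligible or address.refused_via_sel)
```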

The eight-week data collection period for the LMS is notably longer than that of the LFS, which comprises face-to-face collection for two weeks at Wave 1. This was primarily because of the mixed-mode nature of the LMS and evidence from previous online tests that demonstrated that a two-week, online-only collection period would generate a high level of online take-up. The residual face-to-face collection was extended to six weeks because of the outsourcing of the collection and the availability of interviewers in the sampled areas. The efficiency and suitability of the collection period for future testing will be reviewed based on the results from the Statistical Test.

After the allocated eight-week collection period for each cohort, both the online and face-to-face collection activity stopped and respondents could no longer access the online questionnaire.

Collection cohorts

| Cohort | Total issued households | England | Wales | Scotland |
|---|---|---|---|---|
| 1 | 935 | 763 | 52 | 120 |
| 2 | 933 | 817 | 56 | 60 |
| 3 | 962 | 829 | 35 | 98 |
| 4 | 903 | 784 | 37 | 82 |
| 5 | 905 | 777 | 73 | 55 |
| 6 | 964 | 804 | 71 | 89 |
| 7 | 934 | 831 | 34 | 69 |
| 8 | 936 | 823 | 38 | 75 |
| 9 | 953 | 827 | 37 | 89 |
| 10 | 911 | 787 | 34 | 90 |
| 11 | 930 | 766 | 55 | 109 |
| 12 | 938 | 814 | 53 | 71 |
| 13 | 936 | 828 | 36 | 72 |
| 14 | 1,007 | 866 | 53 | 88 |
| 15 | 1,002 | 858 | 53 | 91 |
| Total | 14,149 | 12,174 | 717 | 1,258 |

Table 2: Sample size for each cohort, by country

The total sample of 14,149 addresses was split into 15 “cohorts”, with a new cohort issued each week over 15 consecutive weeks. This is demonstrated in Table 2. The choice of splitting the sample over 15 weeks, compared to the 13 weeks used for the LFS, was based on the size of the sample and the availability of field interviewers in each region.

The composition of each individual cohort was not a proportional representation of the sample; rather, the sample as a whole was proportionally representative by country and region. This was for operational reasons, to account for interviewer availability and the logistics of fulfilling the engagement strategy.

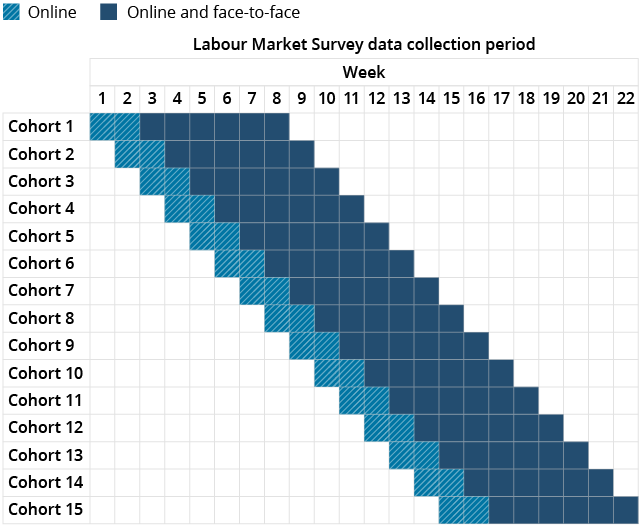

Figure 1: Data collection periods by cohort

Source: Office for National Statistics – Labour Market Survey Statistical Test

Figure 1 demonstrates the collection periods for each cohort, including the two weeks in which data collection was exclusively online and the subsequent six weeks of mixed-mode, online and face-to-face collection.

11. Response and engagement rates

The main findings were that the engagement rate for the LMS test (that is, the proportion of eligible sampled households that accessed the survey) was 60.7% overall; online engagement was 31.1% and face-to-face engagement was 29.6%.

The response rate (that is, the proportion of eligible sampled households that provided usable data) was 56.5%. The online (28.4%) and face-to-face (28.1%) response rates were very similar.

The engagement rate is an important metric for online surveys as it provides data on the effectiveness of the engagement strategy. If households read the materials they are sent, access the website, enter their unique access code (UAC) but proceed no further, then it suggests that the communication strategy has been effective in getting such a household engaged to the point of viewing the survey. Further research will be performed to determine how such households can be encouraged to provide their data.

All engagement rates and response rates are based on an eligible sample proportion of 94.9%; of the 14,149 households sampled, 13,423 were eligible for the Labour Market Survey (LMS) Statistical Test. Ineligibility was determined by calls to the Office for National Statistics (ONS) Survey Enquiry Line (SEL), postal communications that were returned by the postal service, and assessments by face-to-face interviewers.
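The arithmetic behind these rates can be reproduced as a simple check. The sketch below is illustrative only: the `rate` helper is a hypothetical name introduced for the example, and the engagement counts are those reported in Table 3.

```python
# Illustrative check of the eligible proportion and the engagement rates.
# Counts come from this report; `rate` is a hypothetical helper.

SAMPLED_HOUSEHOLDS = 14_149
ELIGIBLE_HOUSEHOLDS = 13_423


def rate(count: int, base: int = ELIGIBLE_HOUSEHOLDS) -> float:
    """Percentage of `base` represented by `count`, to one decimal place."""
    return round(100 * count / base, 1)


# Share of the sampled households that were eligible for the test.
eligible_share = rate(ELIGIBLE_HOUSEHOLDS, SAMPLED_HOUSEHOLDS)

# Engagement rates use eligible households as the denominator.
engagement = {
    "online": rate(4_171),
    "face_to_face": rate(3_971),
    "total": rate(8_142),
}

print(eligible_share, engagement)
```

Using eligible households rather than all sampled households as the denominator is what allows the published percentages to be recovered from the household counts.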

The overall engagement rate, defined as the proportion of households that either visited the survey website and entered their UAC to access the survey or provided any survey information to a face-to-face interviewer, was 60.7% (Table 3). Online engagement was 31.1%, while face-to-face engagement was 29.6%.

| | Online N | Online % | Face-to-face N | Face-to-face % | Total N | Total % |
|---|---|---|---|---|---|---|
| Engagement rate | 4,171 | 31.1 | 3,971 | 29.6 | 8,142 | 60.7 |
| Response rate | 3,818 | 28.4 | 3,770 | 28.1 | 7,588 | 56.5 |
| Full completions | 3,595 | 26.8 | 3,719 | 27.7 | 7,314 | 54.5 |
| Usable partial data | 223 | 1.7 | 51 | 0.4 | 274 | 2.0 |
| Unusable partial data | 353 | 2.6 | 201 | 1.5 | 554 | 4.1 |

Table 3: Engagement and response rates for the Labour Market Survey Statistical Test, household level

The overall response rate, defined as the proportion of households that provided full data for at least one person resident in that household, was 56.5%. The online response rate was 28.4% and the face-to-face response rate was 28.1%.

The proportion of fully completing households (that is, those that provided full details for all residents) was 54.5%; for the online mode, 26.8% of eligible households fully completed and for face-to-face it was 27.7% of eligible households.

Partial completions were categorised in two ways: usable and unusable. Usable partial data include instances where at least one member of a household has completed all of their survey sections. Unusable partial data are defined as cases where no household members fully completed; this can range from households that entered their UACs online but entered no data, through to households where an individual member started to complete the survey but did not reach the end of the interview. The definition of a usable partial differs between the LMS and the Labour Force Survey (LFS). The LFS defines this as a household where at least one question block (that is, a series of related questions) has been completed. The LMS definition is not fixed and may change in future. As expected from the evidence from previous LMS testing, there is a higher proportion of partially completing households online (1.7% usable, 2.6% unusable) compared with face-to-face (0.4% usable, 1.5% unusable).
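The relationship between these household outcome categories can be made explicit with a short sketch. The counts in Table 3 are consistent with reading responding households as full completions plus usable partials, and engaged households as responding households plus unusable partials; the `ModeCounts` class below is a hypothetical structure used only to illustrate that reading.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModeCounts:
    """Household outcome counts for one collection mode (from Table 3)."""
    full: int               # full details provided for all residents
    usable_partial: int     # at least one resident fully completed
    unusable_partial: int   # survey accessed, but no resident fully completed

    @property
    def responding(self) -> int:
        # Households counted in the response rate.
        return self.full + self.usable_partial

    @property
    def engaged(self) -> int:
        # Households counted in the engagement rate.
        return self.responding + self.unusable_partial


online = ModeCounts(full=3_595, usable_partial=223, unusable_partial=353)
face_to_face = ModeCounts(full=3_719, usable_partial=51, unusable_partial=201)

print(online.responding, online.engaged)
print(face_to_face.responding, face_to_face.engaged)
```

On this reading, the gap between the engagement rate and the response rate for each mode is the share of households with unusable partial data.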

| Country or region | Online N | Online % | Face-to-face N | Face-to-face % | Total N | Total % | Eligible households |
|---|---|---|---|---|---|---|---|
| England | 3,669 | 31.7 | 3,337 | 28.8 | 7,006 | 60.5 | 11,577 |
| North East | 184 | 30.4 | 204 | 33.7 | 388 | 64.1 | 605 |
| North West | 451 | 28.2 | 503 | 31.5 | 954 | 59.7 | 1,597 |
| Yorkshire and The Humber | 335 | 30.9 | 348 | 32.1 | 683 | 63.1 | 1,083 |
| East Midlands | 355 | 32.6 | 290 | 26.6 | 645 | 59.2 | 1,090 |
| West Midlands | 324 | 27.8 | 373 | 32.0 | 697 | 59.9 | 1,164 |
| East | 433 | 33.6 | 337 | 26.2 | 770 | 59.8 | 1,288 |
| London | 462 | 27.8 | 449 | 27.0 | 911 | 54.8 | 1,662 |
| South East | 649 | 36.4 | 472 | 26.5 | 1,121 | 62.8 | 1,784 |
| South West | 476 | 36.5 | 361 | 27.7 | 837 | 64.2 | 1,304 |
| Wales | 178 | 26.7 | 203 | 30.5 | 381 | 57.2 | 666 |
| Scotland | 324 | 27.5 | 431 | 36.5 | 755 | 64.0 | 1,180 |
| Total | 4,171 | 31.1 | 3,971 | 29.6 | 8,142 | 60.7 | 13,423 |

Table 4: Engagement rates by country and region for the Labour Market Survey Statistical Test, household level

Table 4 demonstrates the engagement rates in England, Wales and Scotland and the engagement rate for each English region. Engagement was highest in the South West (64.2%) and North East (64.1%) of England and in Scotland (64.0%). The lowest engagement rates were in London (54.8%) and Wales (57.2%). London is traditionally a difficult area for obtaining responses, so this is not an unexpected result. Online engagement was highest in the South West (36.5%) and South East (36.4%) of England, and England was the country with the highest online engagement (31.7%). Face-to-face engagement was higher in Scotland (36.5%) and in the more northern areas of England such as the North East (33.7%), Yorkshire and The Humber (32.1%) and the North West (31.5%).

| Country or region | Online N | Online % | Face-to-face N | Face-to-face % | Total N | Total % | Eligible households |
|---|---|---|---|---|---|---|---|
| England | 3,365 | 29.1 | 3,165 | 27.3 | 6,530 | 56.4 | 11,577 |
| North East | 169 | 27.9 | 202 | 33.4 | 371 | 61.3 | 605 |
| North West | 421 | 26.4 | 452 | 28.3 | 873 | 54.7 | 1,597 |
| Yorkshire and The Humber | 312 | 28.8 | 347 | 32.0 | 659 | 60.8 | 1,083 |
| East Midlands | 329 | 30.2 | 277 | 25.4 | 606 | 55.6 | 1,090 |
| West Midlands | 289 | 24.8 | 351 | 30.2 | 640 | 55.0 | 1,164 |
| East | 392 | 30.4 | 322 | 25.0 | 714 | 55.4 | 1,288 |
| London | 402 | 24.2 | 420 | 25.3 | 822 | 49.5 | 1,662 |
| South East | 605 | 33.9 | 460 | 25.8 | 1,065 | 59.7 | 1,784 |
| South West | 446 | 34.2 | 334 | 25.6 | 780 | 59.8 | 1,304 |
| Wales | 161 | 24.2 | 199 | 29.9 | 360 | 54.1 | 666 |
| Scotland | 292 | 24.7 | 406 | 34.4 | 698 | 59.2 | 1,180 |
| Total | 3,818 | 28.4 | 3,770 | 28.1 | 7,588 | 56.5 | 13,423 |

Table 5: Response rates by country and region for the Labour Market Survey Statistical Test, household level

Table 5 demonstrates the response rates by country and English region. Overall response was highest in the North East of England (61.3%) and Yorkshire and The Humber (60.8%), followed by the South West (59.8%), the South East (59.7%) and Scotland (59.2%). Online response was highest in the South West (34.2%) and South East (33.9%) of England and in England overall (29.1%). In the North East, Yorkshire and The Humber, and Scotland, face-to-face responses outnumbered online responses, which suggests that the higher overall response in these areas relied more on face-to-face collection; in the South West and South East, it relied more on online collection. The results for the breakdown of engagement and response will be considered with regard to the implementation of future engagement strategies and how online response can be increased.
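A regional comparison of this kind can be reproduced directly from the counts in Table 5. The following sketch is illustrative only: the `RESPONSES` dictionary, the `summarise` helper and the "face-to-face share" measure are names and constructs introduced for the example, not part of the published methodology.

```python
# Illustrative sketch: rank areas by overall response rate and show how much
# of each area's response came from face-to-face collection.
# Values are (online households, face-to-face households, eligible households)
# taken from Table 5.

RESPONSES = {
    "North East": (169, 202, 605),
    "North West": (421, 452, 1_597),
    "Yorkshire and The Humber": (312, 347, 1_083),
    "East Midlands": (329, 277, 1_090),
    "West Midlands": (289, 351, 1_164),
    "East": (392, 322, 1_288),
    "London": (402, 420, 1_662),
    "South East": (605, 460, 1_784),
    "South West": (446, 334, 1_304),
    "Wales": (161, 199, 666),
    "Scotland": (292, 406, 1_180),
}


def summarise(online: int, face_to_face: int, eligible: int) -> dict:
    """Overall response rate and face-to-face share of responses, in per cent."""
    total = online + face_to_face
    return {
        "response_rate": round(100 * total / eligible, 1),
        "face_to_face_share": round(100 * face_to_face / total, 1),
    }


summary = {area: summarise(*counts) for area, counts in RESPONSES.items()}

# Rank on the unrounded rate so that ties in the rounded figures do not matter.
ranked = sorted(
    RESPONSES,
    key=lambda area: sum(RESPONSES[area][:2]) / RESPONSES[area][2],
    reverse=True,
)

for area in ranked:
    print(area, summary[area])
```

Ranking on the unrounded rates avoids ambiguity where two areas share the same published percentage to one decimal place.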

| Individual response status | LMS (%) | LFS (%) |
|---|---|---|
| Complete | 92.6 | 95.3 |
| Partial | 1.6 | 3.2 |
| Not started | 5.9 | 1.5 |

Table 6: Individual completions and partials, Labour Market Survey and Labour Force Survey

Table 6 shows the proportion of individuals that completed, partially completed or did not start the survey. Of individuals who were added to the household grid of the LMS, 92.6% completed, 1.6% partially completed (providing either usable or unusable data) and 5.9% did not start or access their individual survey. In comparison, the LFS had a higher completion rate (95.3%), a higher partial rate (3.2%) and a lower “not started” rate (1.5%).

| | Online N | Online % | Face-to-face N | Face-to-face % | Total N | Total % |
|---|---|---|---|---|---|---|
| Response provided by proxy | 1,424 | 19.8 | 2,495 | 34.9 | 3,919 | 27.3 |
| Response provided in person | 5,785 | 80.2 | 4,647 | 65.1 | 10,432 | 72.7 |
| Total | 7,209 | 100.0 | 7,142 | 100.0 | 14,351 | 100.0 |

Table 7: Proxy completion rates by mode, individual level (excludes those aged under 16 years)

Proxy response data are data that are supplied on behalf of someone else, usually because the person in question is absent or unavailable to provide their data. Proxy responses only cover factual data; any opinion-based data are not collected. All data for individuals aged under 16 years are collected by proxy. Both the LMS and LFS accept proxy responses, but the LMS definition of a proxy response was different to the definition used for the LFS. The LMS did not place any restrictions on who could provide answers on behalf of another person. In comparison, the LFS requires proxy data to be provided by another member of the person's household, a carer or, for non-English speakers, an English-speaking relative.

Excluding those aged under 16 years, the LMS proxy rate was 27.3% overall (Table 7). For the online mode, the proportion was 19.8%; this is consistent with the previous LMS, which had a proxy rate of approximately 20%. The proportion of proxy responses for the face-to-face mode was 34.9%. There are a number of possible explanations for this difference. For example, the online self-completion mode allows households to provide their data at a time of their convenience, which means there is a greater opportunity for data to be provided when as many household members as possible are available. By comparison, an interviewer in a face-to-face collection situation may need to make multiple visits (which is not always possible) and would have less opportunity to obtain an interview from everyone in person. Another possibility is that a proxy response is defined by the respondent in the self-completion online mode, whereas it is defined by the interviewer in the face-to-face mode. As a comparison, the proxy rate for the LFS is approximately 33%.

12. Paradata

Paradata are data that relate to how the survey is collected, and they can provide valuable insight into the effectiveness of operations and information on how respondents interact with the survey.

| Device | N | % |
|---|---|---|
| Desktop | 4,707 | 49.8 |
| Smartphone | 1,670 | 17.7 |
| Tablet | 1,840 | 19.5 |
| Other or unknown | 1,228 | 13.0 |
| Total | 9,445 | 100.0 |

Table 8: Type of device used to access the online survey, individual level

Paradata regarding the type of device used to access the online survey suggest that desktops were the most common device used (49.8%, Table 8). This is consistent with previous Labour Market Survey (LMS) tests and with other online social surveys. The use of tablets (19.5%) and smartphones (17.7%) was relatively similar in proportion; again, this is consistent with previous testing.

| Browser | N | % |
|---|---|---|
| Chrome | 4,658 | 53.9 |
| Firefox | 406 | 4.7 |
| Internet Explorer | 660 | 7.6 |
| Safari | 2,871 | 33.2 |
| Other or unknown | 46 | 0.5 |
| Total | 8,641 | 100.0 |

Table 9: Type of browser used to access the online survey, individual level

Table 9 shows that the Chrome browser was used by most individuals (53.9%) who responded to the survey, followed by the Safari browser (33.2%). The four main browsers (Chrome, Firefox, Internet Explorer and Safari) accounted for 99.5% of the browsers used.

| Question category | N | % |
|---|---|---|
| Proxy check | 38 | 17.6 |
| Socio-demographic | 74 | 34.3 |
| Labour market | 76 | 35.2 |
| Other | 28 | 13.0 |
| Total | 216 | 100.0 |

Table 10: Question category for instances of online survey drop-out, individual level

Survey “drop-out” refers to the point at which a respondent closes down their online survey without completing it and does not return to it. This is a challenge that online surveys need to overcome, and there are many reasons a respondent may drop out. These include if a respondent finds a question difficult to complete, does not understand what they are being asked or the relevance of the question, is concerned about confidentiality, or cannot provide a valid answer. The user-centric design of the prototype LMS means that such issues should have been mitigated to an extent, but there were still instances of drop-out. Additionally, the prototype questionnaire allowed respondents to bypass any question they did not want to answer, which was a further attempt to reduce drop-out. A total of 216 individuals started to complete the online survey but dropped out at some point during the data entry process.

Table 10 shows the type of question at which these individuals exited their session; individual question drop-out cannot be shown owing to low base numbers. The highest proportion of drop-outs occurred during the labour market questions (35.2%), closely followed by the socio-demographic questions (34.3%). The labour market questions are later in the survey than the socio-demographic and proxy checks, so this factor needs to be considered as a reason for drop-out. These data will be analysed further to determine why drop-outs occurred and how this can be reduced for future testing.
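The percentage distribution of drop-outs can be recomputed from the category counts as a consistency check. This is a minimal sketch; the `DROP_OUTS` dictionary and the `shares` variable are illustrative names, and the counts are those reported in Table 10.

```python
# Illustrative check: percentage of drop-outs falling in each question category.
# Counts are the individual-level figures reported in Table 10.

DROP_OUTS = {
    "Proxy check": 38,
    "Socio-demographic": 74,
    "Labour market": 76,
    "Other": 28,
}

total_drop_outs = sum(DROP_OUTS.values())

# Share of all drop-outs in each category, to one decimal place.
shares = {
    category: round(100 * count / total_drop_outs, 1)
    for category, count in DROP_OUTS.items()
}

for category, share in shares.items():
    print(f"{category}: {share}%")
print(f"Total individuals who dropped out: {total_drop_outs}")
```

Because the shares are computed against the total number of drop-outs rather than all online respondents, they describe where drop-out occurred, not how likely a respondent was to drop out at each stage.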

| Session count | N | % |
|---|---|---|
| 1 | 8,287 | 95.5 |
| 2 | 137 | 1.6 |
| 3 | 213 | 2.5 |
| 4 | 10 | 0.1 |
| 5 or more | 30 | 0.3 |
| Total | 8,677 | 100.0 |

Table 11: Number of instances of accessing the online survey, individual level

The online survey allowed households or individuals to access the survey multiple times, potentially using different devices; this was implemented so those who had to exit the survey without completing could return at a future date to enter further data. Table 11 demonstrates that the vast majority of individuals (95.5%) who fully completed the survey did so in a single session. This is consistent with previous LMS tests.

13. Summary

This technical report for the Labour Market Survey (LMS) Statistical Test provides an overview of the prototype survey design and details on the engagement, response and paradata results. It is not indicative of the final design of the LMS, which will be refined based on the outcomes from this test, ongoing research and future testing. The engagement and response results have demonstrated that a transformed prototype LMS can achieve an online engagement rate of 31.1% and an online response rate of 28.4%. The findings from the test can be used to determine if the engagement rate can be increased and more households can be encouraged to respond fully.
